Transformer

The neural network architecture behind GPT, Claude, and nearly every modern LLM. Built on attention.

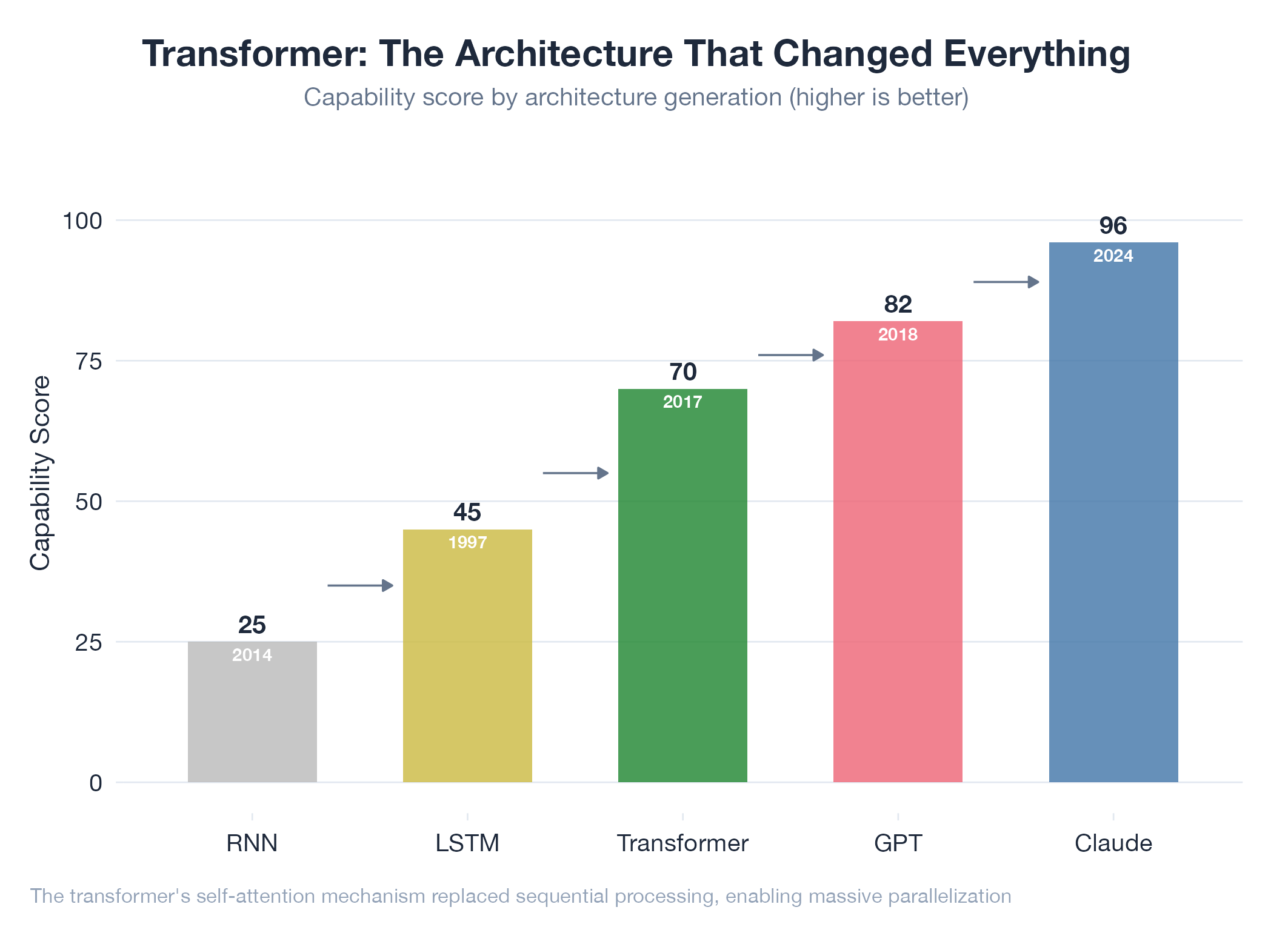

A transformer is a neural network that reads an entire input at once and decides which parts matter for understanding each piece, rather than processing text sequentially, word by word. The key idea (popularized by the 2017 paper “Attention Is All You Need”) is self-attention: for each token (roughly a word or word fragment), the model learns how much to “attend to” every other token. Because all positions are processed together rather than one after another, transformers parallelize well on GPUs, and because any position can attend directly to any other, they can capture long-range dependencies. The major LLMs today (GPT-4, Claude, Gemini, Llama) are all transformers. Differences are mainly in scale, training data, and fine-tuning, not the fundamental architecture.
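To make the "attend to" idea concrete, here is a minimal sketch of scaled dot-product self-attention in plain Python. It assumes a toy 3-token input with hand-picked 2-dimensional vectors, and it reuses the same vectors as queries, keys, and values; a real transformer would instead derive those three from learned projections of the token embeddings. The function names `softmax` and `self_attention` are illustrative, not taken from any library.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention over a short sequence.

    queries, keys, values: one float vector per token.
    Returns (outputs, weights), where weights[i][j] is how much
    token i attends to token j.
    """
    d = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Similarity of this token's query to every token's key.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        w = softmax(scores)
        # Output is a weighted mix of all value vectors.
        out = [sum(w[j] * values[j][c] for j in range(len(values)))
               for c in range(len(values[0]))]
        outputs.append(out)
        weights.append(w)
    return outputs, weights

# Assumption: toy vectors standing in for learned token representations.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(x, x, x)
```

Each row of `weights` sums to 1. In this toy input, the first token attends equally to itself and to the third token, and less to the second, because its vector overlaps with the first and third but not the second.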

How It Works

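Multiple attention heads run in parallel, and each can learn to track a different kind of relationship (for example grammar, meaning, or position). Each head produces, for every position, a weighted mix of all input positions. Feed-forward layers then transform each position's representation. Stack many of these blocks (GPT-3 used 96) and train on enough text, and the model picks up behavior that looks like reasoning. Context windows are limited in part because standard attention costs O(n²) compute and memory in the sequence length n.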
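The quadratic cost is easy to see with a little arithmetic. The sketch below counts the pairwise attention scores computed in one forward pass, assuming standard full attention where every token attends to every other token in every head of every layer. The function name and the default head and layer counts are hypothetical, chosen only for illustration.

```python
def attention_score_count(n_tokens, n_heads=1, n_layers=1):
    """Number of pairwise attention scores in one forward pass.

    Assumes standard full attention: each head in each layer scores
    every token against every token, giving n_tokens ** 2 scores.
    """
    return n_layers * n_heads * n_tokens * n_tokens

# Doubling the sequence length quadruples the number of scores.
for n in (1_000, 2_000, 4_000):
    print(n, attention_score_count(n))
```

Going from 1,000 to 2,000 tokens takes the count from 1,000,000 to 4,000,000 per head per layer, which is why longer context windows get expensive quickly.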

Example

The lab uses transformer-based models across every project: Claude for analysis and code, DeepSeek for reasoning, Gemini for vision. Understanding the architecture helps explain why hallucinations happen (the model produces plausible patterns, not verified facts), why token limits matter (quadratic attention cost), and why fine-tuned specialists can beat generalists on narrow tasks.