Token

How AI reads text: not words, but chunks. A token is roughly 3/4 of a word on average.

How AI reads text: not words, but chunks. A token is roughly 3/4 of a word on average.

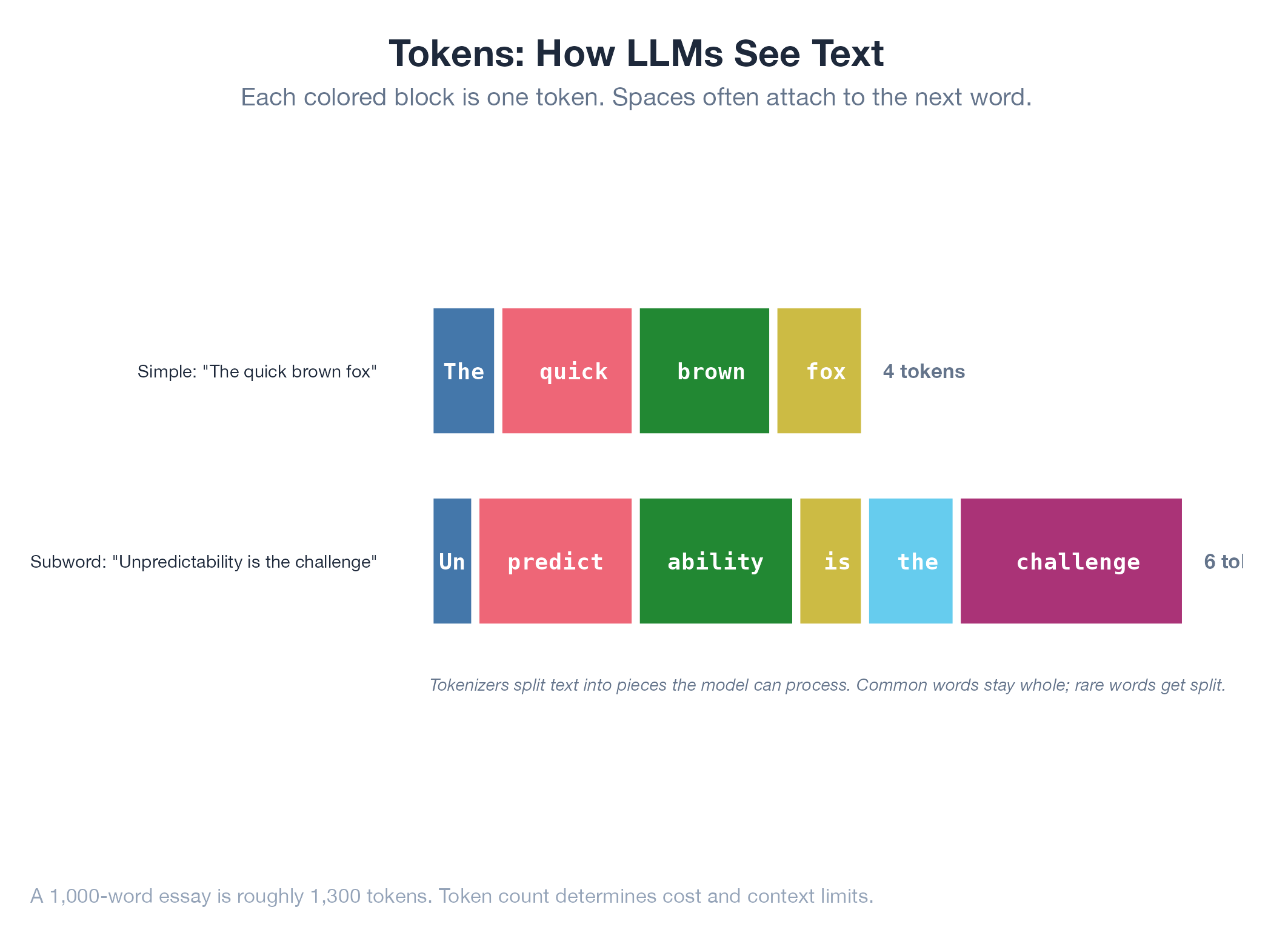

AI models don’t read text word by word: they break it into “tokens,” which are chunks that might be whole words, parts of words, or single characters. “Understanding” might become “under” + “standing.” “The” is one token. An unusual word might split into four. The model has a fixed vocabulary of 32,000–100,000 such pieces, and every input is built from them. Roughly 1 token ≈ 3/4 of an English word ≈ 4 characters. Tokens are why context windows are measured in token counts (128K, 1M) and why API pricing is per-token.

How It Works

Build a vocabulary of common subword units from a large corpus (BPE or SentencePiece algorithm) → split any input into those units → each unit maps to a numerical ID the model processes. Numbers tokenize digit by digit, which is one reason LLMs struggle with arithmetic.

Example

The candidate match report orchestrates four parallel agents, each consuming tokens for analysis. The J-Score prompt engineering minimizes token usage while maximizing scoring accuracy: trimming from 800 to 400 tokens per candidate roughly halved API costs with no accuracy drop. Transformers process all tokens in parallel, making context size the main cost driver.