RMSE (Root Mean Square Error)

Average prediction error that penalizes big misses more than small ones. Lower is better.

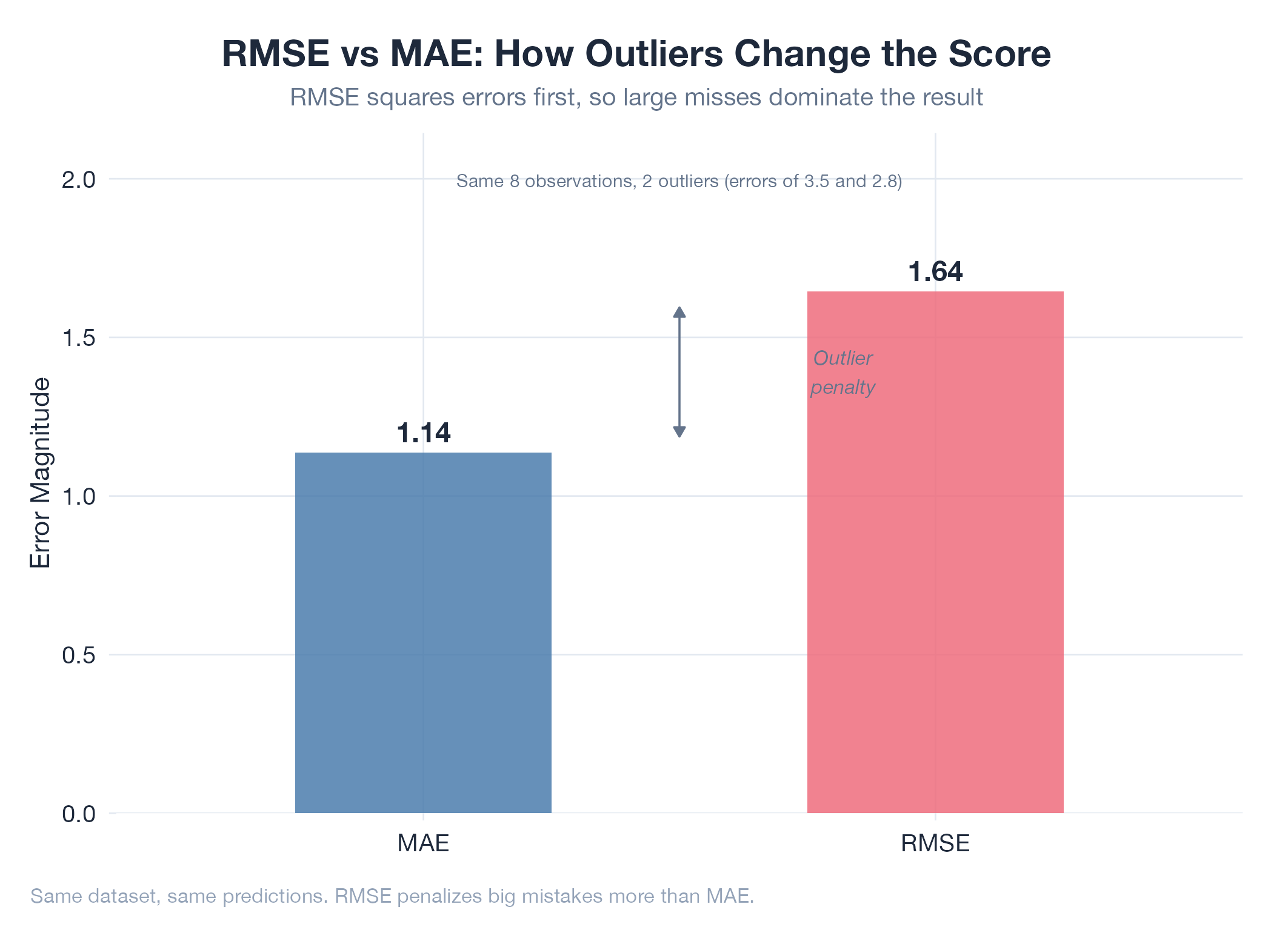

RMSE measures prediction error with a deliberate bias toward large misses. Square each error, average the squares, then take the square root. The squaring step amplifies outliers: one terrible prediction raises your score far more than several small misses would. RMSE is never lower than MAE, and the gap widens as your errors become less consistent. If RMSE ≫ MAE, you have outlier predictions worth investigating. If RMSE ≈ MAE, your errors are similar in size across all predictions.
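
A quick worked comparison, using made-up error values rather than anything from a real model run, shows how one large miss moves RMSE while leaving MAE unchanged:

$$
\text{Errors } (3, 3, 3, 3):\quad \text{MAE} = \frac{3+3+3+3}{4} = 3, \qquad \text{RMSE} = \sqrt{\frac{9+9+9+9}{4}} = 3
$$

$$
\text{Errors } (1, 1, 1, 9):\quad \text{MAE} = \frac{1+1+1+9}{4} = 3, \qquad \text{RMSE} = \sqrt{\frac{1+1+1+81}{4}} = \sqrt{21} \approx 4.58
$$

Both sets have the same average miss, but the single error of 9 contributes 81 of the 84 squared units in the second set, which is what pushes RMSE above MAE.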

How It Works

RMSE = √(mean((predicted − actual)²)). The result is in the same units as your target variable. To interpret it, compare RMSE to MAE: if they’re similar, errors are consistent; if RMSE is much larger, a few outlier failures are dominating the score.
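
The formula can be sketched in a few lines of Python. This is a minimal illustration using only the standard library; the function names `rmse` and `mae` and the sample values are hypothetical and are not taken from any tracked model.

```python
import math


def rmse(predicted, actual):
    """Root mean square error: square each error, average, take the root."""
    if len(predicted) != len(actual) or len(predicted) == 0:
        raise ValueError("inputs must be non-empty and the same length")
    squared_errors = [(p - a) ** 2 for p, a in zip(predicted, actual)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


def mae(predicted, actual):
    """Mean absolute error, used here as the comparison baseline."""
    if len(predicted) != len(actual) or len(predicted) == 0:
        raise ValueError("inputs must be non-empty and the same length")
    absolute_errors = [abs(p - a) for p, a in zip(predicted, actual)]
    return sum(absolute_errors) / len(absolute_errors)


# Hypothetical sample: three small misses and one large one.
actual = [10.0, 12.0, 14.0, 16.0]
predicted = [11.0, 11.0, 15.0, 22.0]

rmse_value = rmse(predicted, actual)
mae_value = mae(predicted, actual)

# A ratio well above 1 suggests a few large errors dominate the score.
ratio = rmse_value / mae_value
print(f"RMSE={rmse_value:.3f}  MAE={mae_value:.3f}  ratio={ratio:.2f}")
```

Both values come out in the units of the target variable, so they can be compared directly. What counts as a "much larger" ratio is a judgment call that depends on the data; no fixed threshold is implied here.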

Example

During Oil v16 forward testing, RMSE revealed something MAPE missed: while average percentage error was 2.26%, a handful of sessions with geopolitical spikes produced outsized errors that dragged RMSE up. This led directly to recalibrating Monte Carlo disruption parameters. Tracked in Oil v16 Sell Model.