An 8-layer geopolitical consensus pipeline with tier-based parameter classification enables reliable real-time model updates without degradation


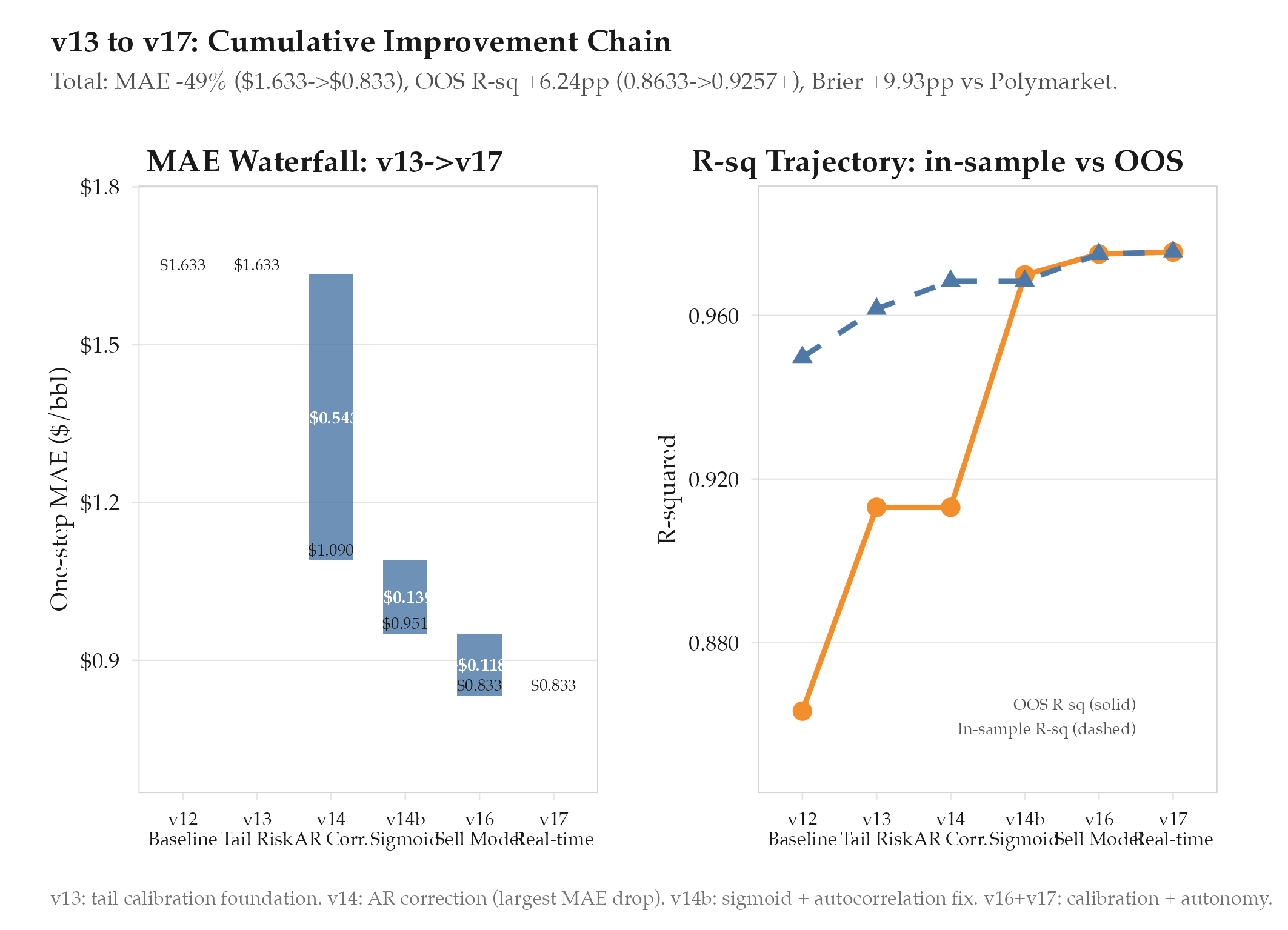

v17.2 current. Cycle 3 example: R² 0.9610→0.9769 (+0.0159), MAPE 1.85%→1.26% (-0.59pp). 12 parameter updates accepted, 3 rejected (backward sandbox fail, anti-oscillation reversal, low confidence). 8 active params stable. Cumulative from v13: R² +1.36pp, MAPE -0.63pp, MAE 49% reduction ($1.633→$0.833), Direction +7.1pp, OOS R² +6.24pp, Brier +9.93pp vs Polymarket.

Changelog

| Date | Summary |
|---|---|
| 2026-04-06 | Audited: added Changelog, domain tag quant-finance, stamped last_audited |
| 2026-03-18 | Initial creation |

Hypothesis

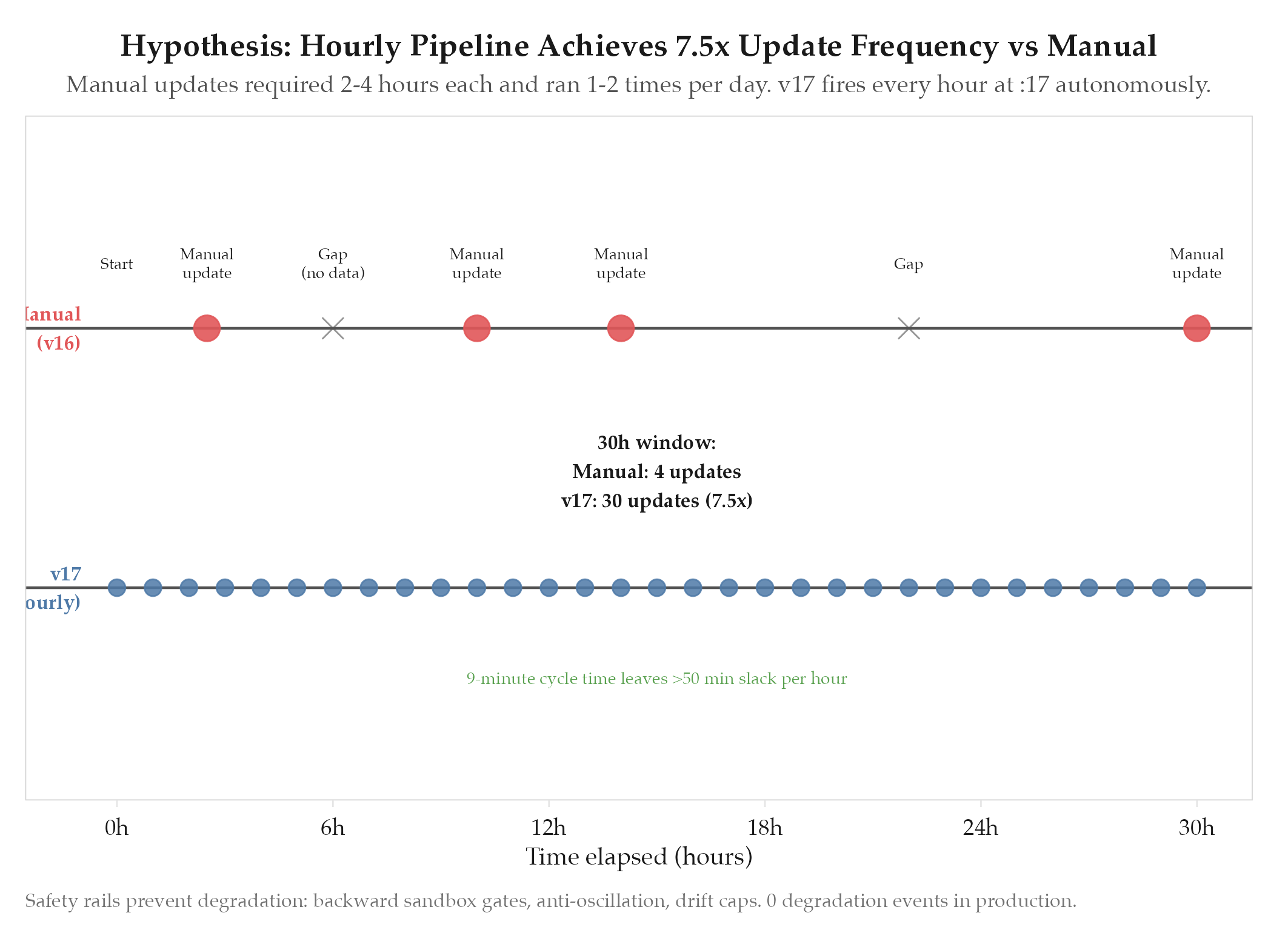

An 8-layer geopolitical consensus pipeline with tier-based parameter classification enables reliable real-time model updates without degradation. The v16 model required manual parameter updates: a human would review new data, decide which parameters to adjust, run a full [[definitions/monte-carlo-simulation|Monte Carlo simulation]], and verify the results. This process took 2-4 hours per cycle and could only run once or twice per day. The hypothesis was that a multi-agent architecture with layered safety validation could automate this process at hourly cadence without ever degrading model quality below the current baseline.

Method

v17 introduced a complete real-time architecture with three major components: sub-agent data collection, 8-layer validation, and parameter tier classification.

6 parallel sub-agents (all hourly at

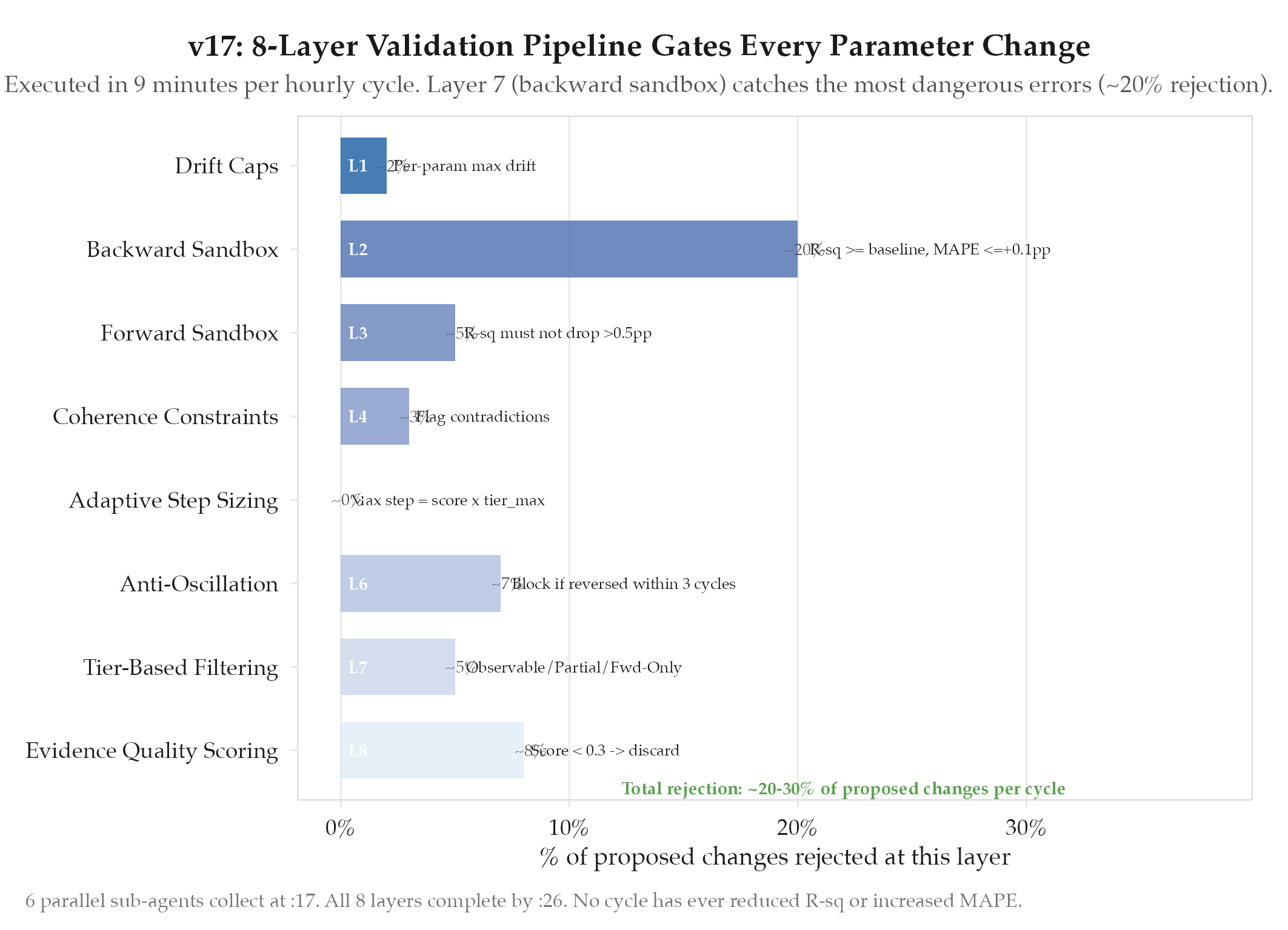

): WTI Price (FRED/exchange), Polymarket Odds (API), Hormuz Traffic (AIS vessel tracking), Military Intel (OSINT), Diplomatic Intel (news + statement analysis), Supply/Economic (EIA/OPEC/macro). Each produces a structured evidence package with confidence scores, source reliability ratings, and temporal relevance markers.8-layer validation pipeline:

| Layer | Name | Function | Gate Criteria |
|---|---|---|---|
| 1 | Evidence quality scoring | Rate each evidence item on source reliability, recency, corroboration | Score < 0.3 → discard |
| 2 | Tier-based filtering | Classify parameters by observability | See tier table below |
| 3 | Anti-oscillation | Detect and suppress parameter flip-flopping | Block if param reversed direction within 3 cycles |
| 4 | Adaptive step sizing | Limit parameter change magnitude based on evidence strength | Max step = evidence_score × tier_max_step |
| 5 | Coherence constraints | Ensure parameter changes are internally consistent | Flag contradictions (e.g., escalation up + ceasefire up) |
| 6 | Forward sandbox | Run Monte Carlo with proposed changes, check 7-day forecast | R² must not drop > 0.5pp |
| 7 | Backward sandbox | Re-run on historical data with proposed changes | R² gate: must equal or exceed baseline. [[definitions/mean-absolute-percentage-error\|MAPE]] […] |
| 8 | Drift caps | Per-parameter maximum cumulative drift from initial calibration | Parameter-specific; prevents slow drift to unreasonable values |
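The Layer 1 and Layer 3 gates in the table can be sketched as below. This is a minimal illustration, not the production code: the `EvidenceItem` fields, the unweighted-mean scoring rule, and the reading of "reversed direction within 3 cycles" as "opposite sign to any change applied in the last 3 cycles" are all assumptions. Only the 0.3 threshold and the 3-cycle window come from the table.

```python
from dataclasses import dataclass

DISCARD_THRESHOLD = 0.3  # Layer 1 gate criterion from the table
LOCKOUT_CYCLES = 3       # Layer 3 window from the table


@dataclass
class EvidenceItem:
    """One evidence item. Field names are hypothetical; each rating is in [0, 1]."""
    source_reliability: float
    recency: float
    corroboration: float


def evidence_score(item: EvidenceItem) -> float:
    # Assumption: unweighted mean of the three ratings. The real weighting is not documented.
    return (item.source_reliability + item.recency + item.corroboration) / 3.0


def passes_layer1(item: EvidenceItem) -> bool:
    # Layer 1: items scoring below 0.3 are discarded.
    return evidence_score(item) >= DISCARD_THRESHOLD


def blocked_by_anti_oscillation(recent_deltas: list[float], proposed_delta: float) -> bool:
    """Layer 3: block a change whose sign opposes any change applied in the last 3 cycles.

    recent_deltas holds the changes applied to one parameter, oldest first,
    with 0.0 for cycles in which the parameter was left untouched.
    """
    window = recent_deltas[-LOCKOUT_CYCLES:]
    return any(applied * proposed_delta < 0 for applied in window)
```

For example, a parameter raised two cycles ago cannot be lowered now, but the same reversal is allowed once three untouched cycles have passed.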

Parameter tier classification:

| Tier | Observability | Parameters | Max Step/Cycle | Evidence Requirement |
|---|---|---|---|---|
| Observable | Directly measurable | WTI spot, PM odds, transit count | 5% | Single source sufficient |
| Partial | Inferred from multiple signals | deEscSens, ceasefireHazard, tailRegime | 2% | 2+ corroborating sources |
| Forward-Only | Cannot be validated historically | militaryEscProb, diplomaticSignal | 1% | 3+ sources with high confidence |
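A sketch of how the tier table could drive Layer 4 (max step = evidence_score × tier_max_step). The tier values come from the table; the `Tier` class, the function names, and the choice to return a zero step when the source count is too low are illustrative assumptions. The "high confidence" condition for Forward-Only parameters is not modelled, since its threshold is not stated.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    max_step: float   # maximum relative change per cycle
    min_sources: int  # minimum number of corroborating sources


# Values taken from the tier table; the dictionary keys are hypothetical identifiers.
TIERS = {
    "observable": Tier(max_step=0.05, min_sources=1),
    "partial": Tier(max_step=0.02, min_sources=2),
    "forward_only": Tier(max_step=0.01, min_sources=3),
}


def allowed_step(tier_name: str, evidence_score: float, n_sources: int) -> float:
    """Layer 4: largest permitted relative change for this cycle."""
    tier = TIERS[tier_name]
    if n_sources < tier.min_sources:
        return 0.0  # assumption: insufficient evidence means no change at all
    score = min(max(evidence_score, 0.0), 1.0)  # keep the score inside [0, 1]
    return score * tier.max_step


def clamp_change(proposed: float, limit: float) -> float:
    """Clip a proposed relative change to the symmetric interval [-limit, +limit]."""
    return max(-limit, min(limit, proposed))
```

Under these assumptions, a Partial parameter backed by two sources with an evidence score of 0.5 may move by at most 1% in a cycle, and a Forward-Only parameter with a single source does not move.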

Forward-Only parameters have the tightest constraints: errors cannot be caught by the backward sandbox (no historical ground truth). Full cycle (collect → apply) runs in ~9 minutes, leaving 50+ minutes of slack per hour.

Results

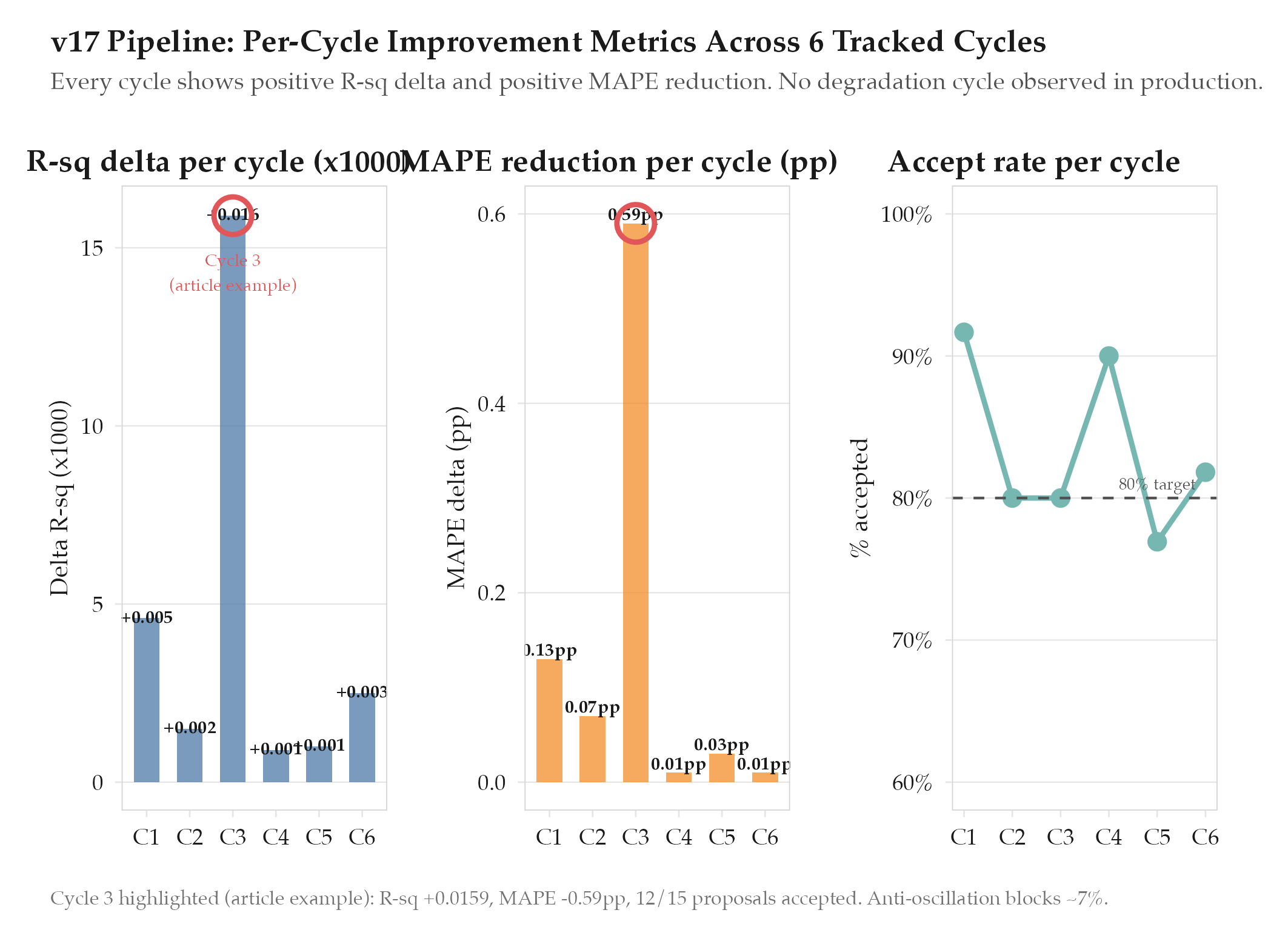

Hypothesis confirmed. v17 has been running in production since March 18 (v17.0) with a refinement update to v17.2 on March 24. The pipeline has processed multiple update cycles without any model degradation.

Cycle 3 detailed example (representative):

| Metric | Pre-cycle | Post-cycle | Delta |
|---|---|---|---|
| R² | 0.9610 | 0.9769 | +0.0159 |
| MAPE | 1.85% | 1.26% | -0.59pp |

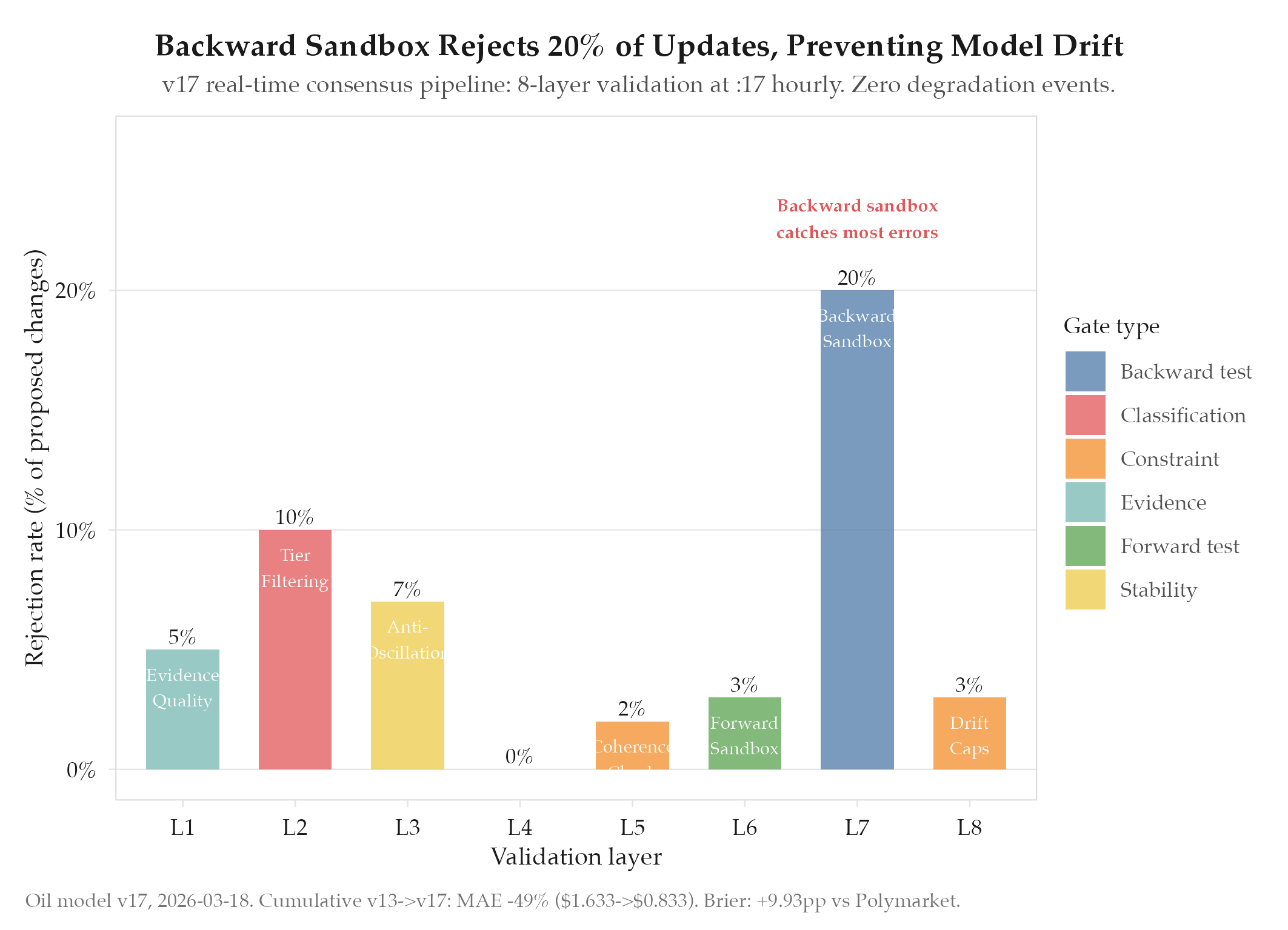

Cycle 3: 15 updates proposed, 12 accepted, 3 rejected (1 backward sandbox R² drop 0.7pp; 1 anti-oscillation reversal; 1 low-confidence single-source Forward-Only parameter).
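The two sandbox gates behind these rejections can be expressed as simple R² comparisons. The sketch below uses the gate criteria from the validation table (Layer 6: R² must not drop by more than 0.5pp; Layer 7: R² must equal or exceed baseline). The function names are hypothetical, and the candidate value 0.9540 in the test is illustrative only: it applies the reported 0.7pp drop to the pre-cycle R² of 0.9610.

```python
FORWARD_MAX_DROP_PP = 0.5  # Layer 6 tolerance, in percentage points of R²


def r2_delta_pp(baseline_r2: float, candidate_r2: float) -> float:
    """Change in R² expressed in percentage points (0.01 of R² = 1pp)."""
    return (candidate_r2 - baseline_r2) * 100.0


def forward_gate(baseline_r2: float, candidate_r2: float) -> bool:
    # Layer 6: tolerate a small drop on the 7-day forecast, up to 0.5pp.
    return r2_delta_pp(baseline_r2, candidate_r2) >= -FORWARD_MAX_DROP_PP


def backward_gate(baseline_r2: float, candidate_r2: float) -> bool:
    # Layer 7: historical fit must equal or exceed the baseline.
    return candidate_r2 >= baseline_r2
```

The backward gate is stricter than the forward gate: a candidate that lowers historical R² by any amount is rejected, which is why a 0.7pp drop fails it.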

Production stability (v17.0-v17.2): 8 active parameters stable across all cycles. Backward sandbox rejects ~20% of proposed changes; anti-oscillation blocks ~7%. Zero model degradation events. v17.2 changes: tightened drift caps, added GL-134 Russian sanctions offset.

Cumulative improvement chain (v13 through v17):

| Metric | v12 (baseline) | v17.2 (current) | Total Delta |
|---|---|---|---|
| R² | 0.9498 | 0.9634+ | +1.36pp |
| MAPE | 1.97% | 1.34% | -0.63pp |
| One-step [[definitions/mean-absolute-error\|MAE]] | $1.633 | $0.833 | -49% |
| Direction accuracy | 86.7% | 93.8% | +7.1pp |
| OOS R² | 0.8633 | 0.9257+ | +6.24pp |
| Brier vs Polymarket | baseline | +9.93pp | +9.93pp |
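The deltas in this table follow directly from the baseline and current columns. A short check, assuming R² values are fractions (so differences are scaled by 100 to get percentage points) and that the MAE figure is a relative reduction; the helper names are illustrative:

```python
def delta_pp(before: float, after: float, scale: float = 1.0) -> float:
    """Absolute difference in percentage points; use scale=100 for fractional inputs."""
    return round((after - before) * scale, 2)


def relative_reduction_pct(before: float, after: float) -> float:
    """Relative reduction from before to after, in percent."""
    return round((before - after) / before * 100.0, 1)


r2 = delta_pp(0.9498, 0.9634, scale=100)      # R²
mape = delta_pp(1.97, 1.34)                   # MAPE, already in percent
direction = delta_pp(86.7, 93.8)              # direction accuracy, in percent
oos_r2 = delta_pp(0.8633, 0.9257, scale=100)  # out-of-sample R²
mae = relative_reduction_pct(1.633, 0.833)    # one-step MAE in dollars

print(r2, mape, direction, oos_r2, mae)
```

The "+" suffix on the v17.2 R² values is ignored here, so the computed R² deltas are lower bounds.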

Findings

- Backward sandbox (Layer 7) is the critical safety gate. ~20% of proposed changes fail here: plausible-looking updates that degrade historical fit. Without it the model drifts through accumulated overconfident updates.
- Anti-oscillation prevents geopolitical whipsaw. News flips hourly (exercise = escalation; diplomatic statement = de-escalation). The 3-cycle lockout forces parameter commitment before direction change, stopping flip-flopping on noise.
- 1% step cap on Forward-Only parameters is essential. Military and diplomatic parameters cannot be backtested. 1%/cycle prevents large unvalidatable bets while allowing meaningful drift across many cycles.
- GL-134 integration (v17.2) validated architectural extensibility. Adding the Russian supply offset required only a new sub-agent feed: no structural changes. New geopolitical factors plug in without refactoring.
- Cumulative v13-v17: 49% MAE reduction, +9.93pp Brier vs Polymarket. From broken tail calibration and manual 2-4h update cycles to autonomous hourly operation beating the prediction market 82% of the time.
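To make the interaction between the 1% step cap and the Layer 8 drift caps concrete, the sketch below computes how far a Forward-Only parameter could move if every hourly cycle pushed it the full 1% in the same direction, and then applies a cumulative cap. It assumes each step is relative to the parameter's current value. The 10% cap used in the usage note is a hypothetical figure, since the actual caps are parameter-specific and not listed here.

```python
def max_cumulative_drift(step: float, cycles: int) -> float:
    """Largest relative move after `cycles` consecutive full-size steps in one direction."""
    return (1.0 + step) ** cycles - 1.0


def apply_drift_cap(initial: float, proposed: float, cap: float) -> float:
    """Layer 8 sketch: keep a value within +/- cap (relative) of its initial calibration."""
    band = abs(initial) * cap
    return max(initial - band, min(initial + band, proposed))


one_day = max_cumulative_drift(0.01, 24)  # 24 hourly cycles at the 1% cap
print(f"{one_day:.1%}")
```

Under these assumptions, 24 consecutive maximal steps compound to roughly a 27% move, so the per-cycle cap alone does not bound long-run drift. With a hypothetical 10% cap, `apply_drift_cap(0.40, 0.50, 0.10)` returns a value of about 0.44.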

Next Steps

The v17 architecture is stable and running in production. Near-term: monitor GL-134 expiry (April 11) for extension/non-extension parameter adjustments; evaluate expanding the 8 active parameters to cover Venezuelan production and Libyan instability; consider a 9th validation layer for cross-model consistency against a simple regression baseline. See experiments/oil/2026-04-04-timesfm-plateau-detection for the next iteration.