Brier Score

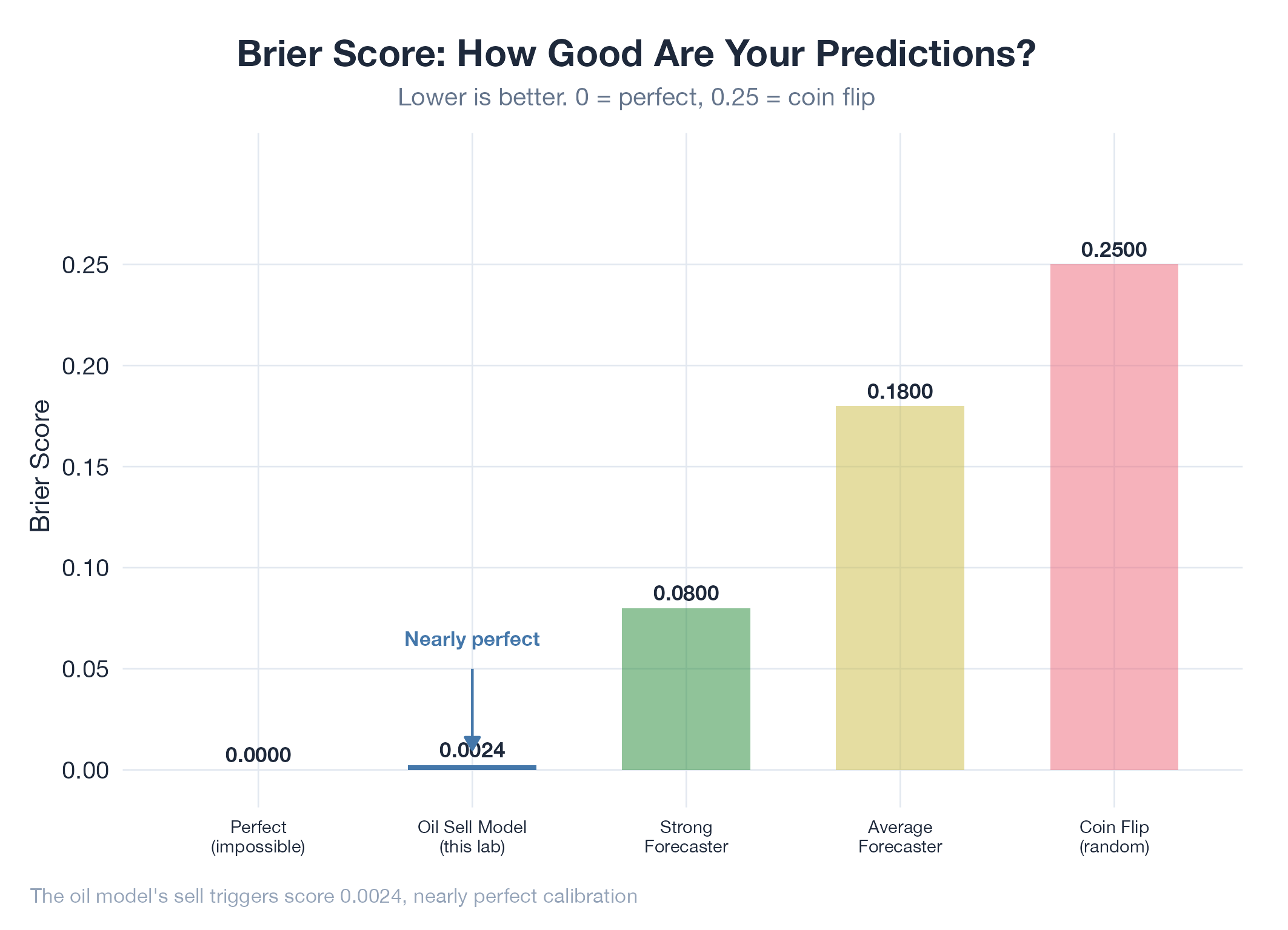

A measure of how good your predictions are. 0 is perfect, 0.25 is coin-flip guessing.

The Brier score measures how accurate and well calibrated your probabilistic predictions are: across all your predictions, how close were your stated confidence levels to reality? Take every prediction, square the difference between your stated probability and the actual outcome (0 if the event did not happen, 1 if it did), and average the results. A score of 0.00 is perfect; 0.25 is what you get by answering 50% every time, so it means you did no better than guessing. Lower is better. Crucially, squaring punishes confident wrong predictions: saying “95% chance” and being wrong hurts far more than saying “55% chance” and being wrong.
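To make the squaring penalty concrete, here is a minimal sketch that compares the two wrong forecasts mentioned above. The helper name `squared_error` is hypothetical and chosen only for illustration.

```python
def squared_error(predicted_probability: float, actual_outcome: int) -> float:
    """Penalty for one forecast: (stated probability - outcome) squared."""
    return (predicted_probability - actual_outcome) ** 2


# Both forecasts said the event would happen; it did not (outcome = 0).
confident_miss = squared_error(0.95, 0)  # 0.95 squared, about 0.9025
cautious_miss = squared_error(0.55, 0)   # 0.55 squared, about 0.3025

print(f"95% and wrong: {confident_miss:.4f}")
print(f"55% and wrong: {cautious_miss:.4f}")
print(f"penalty ratio: {confident_miss / cautious_miss:.2f}")
```

The confident miss costs roughly three times as much as the cautious one, even though both forecasts were on the wrong side of 50%.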

How It Works

Brier = mean((predicted_probability - actual_outcome)²). Scores under 0.10 indicate strong forecasting. Professional prediction-market traders typically score 0.15–0.20.
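The formula above can be sketched in a few lines of plain Python. The function name `brier_score` and the sample forecasts are assumptions made up for illustration; they are not data from the oil model or from Polymarket.

```python
from typing import Sequence


def brier_score(probabilities: Sequence[float], outcomes: Sequence[int]) -> float:
    """Mean of (predicted_probability - actual_outcome)^2 over all predictions."""
    if len(probabilities) != len(outcomes):
        raise ValueError("need exactly one outcome per prediction")
    if len(probabilities) == 0:
        raise ValueError("need at least one prediction")
    total = sum((p - o) ** 2 for p, o in zip(probabilities, outcomes))
    return total / len(probabilities)


# Illustrative outcomes only: 1 = the event happened, 0 = it did not.
outcomes = [1, 0, 1, 1, 0]

coin_flip = brier_score([0.5] * 5, outcomes)                   # always says 50%
perfect = brier_score([1.0, 0.0, 1.0, 1.0, 0.0], outcomes)     # certain and right
forecaster = brier_score([0.9, 0.2, 0.8, 0.7, 0.1], outcomes)  # a decent forecaster

print(f"coin flip:  {coin_flip:.3f}")
print(f"perfect:    {perfect:.3f}")
print(f"forecaster: {forecaster:.3f}")
```

Always answering 50% gives exactly 0.25 regardless of the outcomes, which is why that value serves as the guessing baseline. The made-up forecaster lands well under the 0.10 threshold.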

Example

The oil model’s sell triggers scored a Brier score of 0.0024, nearly perfect calibration, compared to Polymarket’s consensus of ~0.17. That gap of +2.16 percentage points means a laptop model outperformed thousands of traders with real money at stake. Detailed in Oil v17 Realtime Consensus.