Parameter Tuning

Adjusting the knobs on your model to find the best settings. Tuning explores; ratchets only go up.

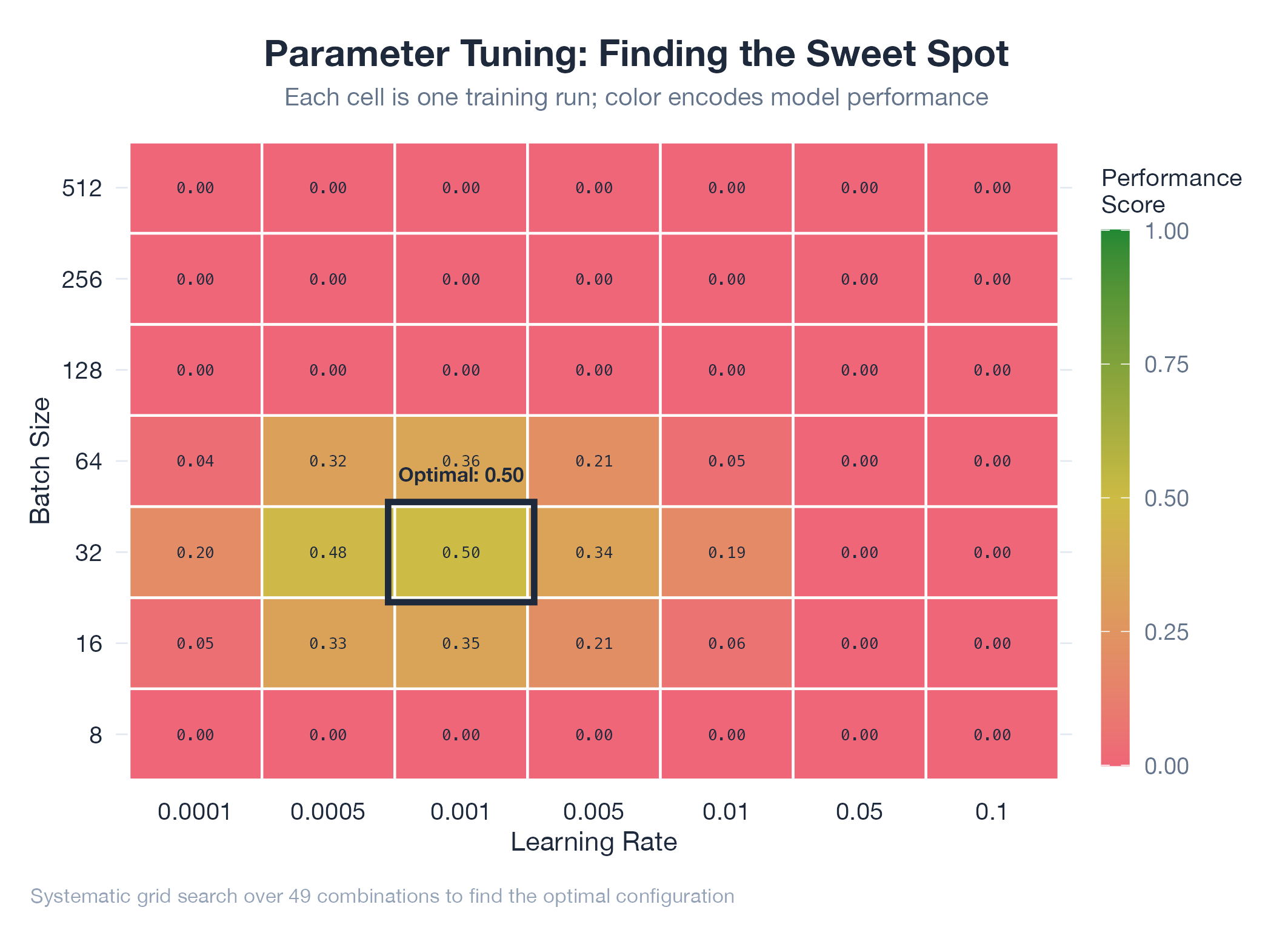

Parameter tuning finds the combination of configurable settings where a model performs best. Unlike a Karpathy ratchet, tuning explores freely: you might accept a temporarily worse configuration while searching for a globally better one. Tuning is the exploration phase; the ratchet is the exploitation phase that locks in gains. Common strategies: grid search (exhaustive, guaranteed coverage for small spaces), random search (surprisingly effective in high dimensions, where usually only a few parameters matter much), and Bayesian optimization (uses previous results to guide the next trial). The hard part: parameters interact, so one-at-a-time tuning often misses the best combination.
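A minimal sketch of why interactions matter, using a made-up objective. The `score` function, the 0..4 value range, and all function names here are illustrative assumptions, not part of any real model. The objective rewards large values of both parameters, but only when they match, so tuning one at a time from the default never leaves the starting point, while grid search finds the joint optimum.

```python
import itertools
import random

VALUES = range(5)  # hypothetical search space: each parameter takes 0..4


def score(a, b):
    """Toy objective (an assumption): large a and b are good, but only if they match."""
    return (a + b) - 3 * (a - b) ** 2


def one_at_a_time(start=(0, 0)):
    """Tune a with b fixed, then tune b with the chosen a fixed (a single pass)."""
    a, b = start
    a = max(VALUES, key=lambda v: score(v, b))
    b = max(VALUES, key=lambda v: score(a, v))
    return (a, b)


def grid_search():
    """Evaluate every combination; exhaustive, so only practical for small spaces."""
    return max(itertools.product(VALUES, VALUES), key=lambda p: score(*p))


def random_search(n_trials=10, seed=0):
    """Evaluate n_trials randomly sampled combinations and keep the best one."""
    rng = random.Random(seed)
    trials = [(rng.choice(VALUES), rng.choice(VALUES)) for _ in range(n_trials)]
    return max(trials, key=lambda p: score(*p))


print("one at a time:", one_at_a_time())   # stuck at (0, 0), score 0
print("grid search:  ", grid_search())     # finds (4, 4), score 8
print("random search:", random_search())   # depends on the seed and trial count
```

Moving either parameter alone away from (0, 0) lowers the score, so the one-at-a-time pass sees no improvement even though moving both together is much better. Random search may or may not hit the optimum with a small budget, but it samples combinations rather than single axes.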

How It Works

Identify parameters → define search space (a range per parameter) → choose search strategy → evaluate each configuration → validate winner on held-out data (prevents overfitting to the tuning set).
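The steps above can be sketched end to end on a toy problem. Everything here is an assumption chosen for illustration: the synthetic series, the single `alpha` parameter (an exponential smoothing weight), the 150/50 split, and the 50-trial random search. It is not the procedure of any particular model.

```python
import random


def make_series(n=200, seed=1):
    """Synthetic noisy random walk; a stand-in for real data."""
    rng = random.Random(seed)
    x, out = 0.0, []
    for _ in range(n):
        x += rng.gauss(0, 1)
        out.append(x + rng.gauss(0, 2))
    return out


def forecast_error(series, alpha):
    """Mean squared one-step-ahead error of exponential smoothing with weight alpha."""
    level, total = series[0], 0.0
    for y in series[1:]:
        total += (y - level) ** 2
        level = alpha * y + (1 - alpha) * level
    return total / (len(series) - 1)


# Steps 1-2: identify the parameters and define a range for each.
search_space = {"alpha": (0.05, 0.95)}

# Keep the last 50 points out of the search entirely.
series = make_series()
tune, holdout = series[:150], series[150:]

# Steps 3-4: choose a strategy (random search here) and evaluate each configuration.
rng = random.Random(0)
configs = [{"alpha": rng.uniform(*search_space["alpha"])} for _ in range(50)]
results = [(forecast_error(tune, c["alpha"]), c) for c in configs]
best_error, best_config = min(results, key=lambda r: r[0])

# Step 5: validate the winner on data the search never saw.
holdout_error = forecast_error(holdout, best_config["alpha"])
print(f"alpha={best_config['alpha']:.3f} "
      f"tune MSE={best_error:.3f} holdout MSE={holdout_error:.3f}")
```

If the held-out error is much worse than the tuning error, the winning configuration has probably overfit the tuning set, and the search space or the amount of tuning data is worth revisiting.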

Example

The oil model’s v14b optimization swept 9 parameters covering MC sample count, time window, AR correction weights, scenario probabilities, and decay rates. More than 200 configurations were tested on 90 days of WTI data. Sweet spot: 360-day time window, 10,000 samples. Seven parameters were then locked in via ratchet. Documented implicitly across the Oil v16 work.