Karpathy Ratchet

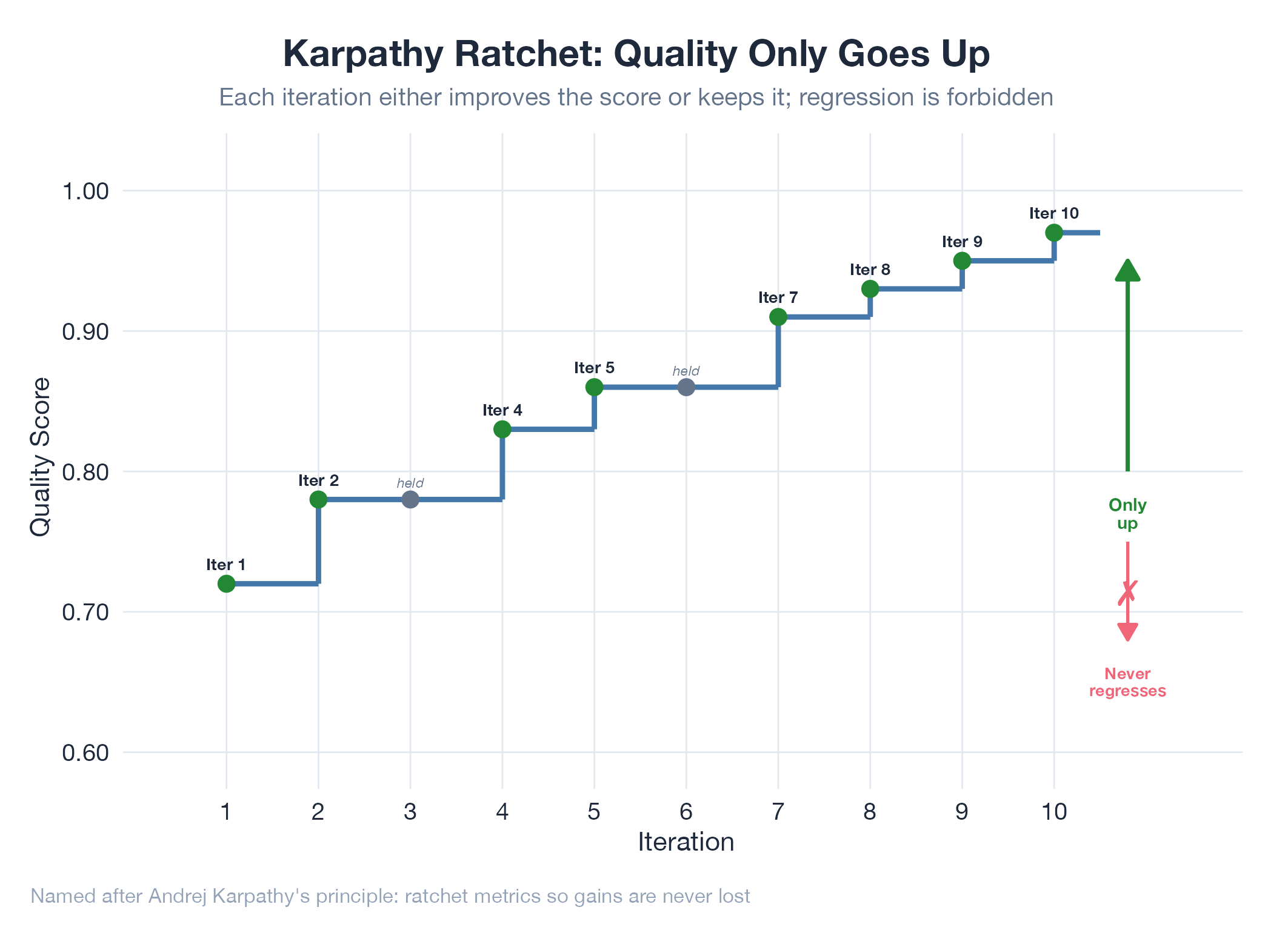

A quality metric that only goes up, never down. Each iteration must beat the previous best.

A Karpathy ratchet is a rule: every change must beat the all-time best score on your quality metric, or it gets rejected. No exceptions. It works like a rock climber clipping into new anchors: every gain locks in, and a slip falls back only to the last anchor, not to the ground. The name comes from Andrej Karpathy’s philosophy of iterative improvement in ML.

Use it when you have a single measurable metric, regression is unacceptable, and the team is tempted to ship changes without measuring their impact. Avoid it when you’re exploring radically different approaches, since it traps you in local optima, or when the metric is noisy: a lucky measurement becomes the new all-time best, and later changes that are genuinely just as good get rejected for failing to beat it.
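To illustrate the noise problem, here is a small simulation, a sketch under stated assumptions rather than a model of any real project. It assumes every proposed change is a no-op, so each measurement is just a fixed hypothetical `true_quality` plus Gaussian noise with standard deviation `noise_sd`; the function name `ratchet_on_noise` and its parameters are made up for illustration.

```python
import random
from typing import List, Tuple


def ratchet_on_noise(
    true_quality: float, noise_sd: float, trials: int, seed: int = 0
) -> Tuple[float, int, List[float]]:
    """Ratchet a metric whose changes do nothing (hypothetical setup).

    Every score is true_quality plus fresh Gaussian noise, so any
    "improvement" the ratchet accepts is pure luck.
    """
    rng = random.Random(seed)
    best = true_quality + rng.gauss(0.0, noise_sd)  # noisy baseline measurement
    accepted = 0
    history = [best]  # all-time best after each trial
    for _ in range(trials):
        score = true_quality + rng.gauss(0.0, noise_sd)  # no-op change, new noise
        if score > best:  # a lucky draw becomes the new anchor
            best = score
            accepted += 1
        history.append(best)
    return best, accepted, history


best, accepted, history = ratchet_on_noise(0.95, 0.01, 200)
print(f"recorded best exceeds true quality by {best - 0.95:+.4f}; {accepted} changes accepted")
```

Since nothing actually improved, the recorded best ends up above the true 0.95 purely by chance, and each accepted "gain" raises the bar that later, equally good changes must clear. The handful of acceptances are all noise, which is why a noisy metric calls for repeated measurements or a margin before the ratchet is applied.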

How It Works

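Measure the current state → experiment with a change → measure again → accept the change only if the new score > all-time best → repeat. Compare against the all-time best, not just the previous iteration, to prevent oscillation: otherwise a change could be accepted merely for beating a run that had itself regressed, and the score could drift back and forth.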
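The loop above can be sketched in a few lines of Python. This is a minimal, hypothetical illustration, not the implementation any of the projects below used: it assumes the metric is a deterministic `measure(state)` function, each candidate change is a function from state to state, and ties count as failures (strict `>`). The names `ratchet`, `measure`, and `candidates` are made up for the example.

```python
from typing import Callable, List, Tuple, TypeVar

T = TypeVar("T")


def ratchet(
    initial: T,
    measure: Callable[[T], float],
    candidates: List[Callable[[T], T]],
) -> Tuple[T, float, List[float]]:
    """Keep a change only if its score beats the all-time best (sketch)."""
    best_state = initial
    best_score = measure(initial)  # measure the current state (the baseline)
    history = [best_score]  # all-time best after each iteration
    for change in candidates:
        # Assumption: every experiment starts from the best accepted state.
        trial = change(best_state)
        score = measure(trial)  # measure again
        if score > best_score:  # strict: ties and regressions are rejected
            best_state, best_score = trial, score
        history.append(best_score)  # the recorded best never goes down
    return best_state, best_score, history


# Toy metric peaking at x = 7; the candidate changes nudge x up or down.
state, score, history = ratchet(
    0,
    lambda x: -(x - 7) ** 2,
    [lambda x: x + 3, lambda x: x - 1, lambda x: x + 3, lambda x: x + 2, lambda x: x - 5],
)
print(state, score, history)  # 6 -1 [-49, -16, -16, -1, -1, -1]
```

In the toy run, the move to x = 8 scores exactly the current best (-1) and is rejected, so the loop stays at x = 6 even though 8 is equally good. That is the strict-comparison choice made visible; a team could instead require a minimum margin, which is the kind of threshold question the rule leaves open.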

Example

Three projects have used the ratchet. Internal audit DQI rose from 0.9049 to 0.9790 over 20 iterations. LinkedIn submission rate rose from 0% to 100% over 10 runs. Oil model MC parameters were ratcheted over 34 days of forward testing. The ratchet’s mechanics are explored further in Ratchet Threshold Exploration.

Related

- Ratchet Threshold Exploration

- Baseline

- Ralph Loop

- DQI

- Iteration Objective: IO-2 (Metric-Ratcheting)

- Goal-Seeking Loop (contrast: IO-1, goal rather than metric)