Red Team Testing

Hire someone to attack your system on purpose. Find vulnerabilities before a real attacker does.

Red team testing puts an adversarial team on your side: their job is to find every way your system fails, not to confirm that it works. The name comes from military war games, where the red team plays the enemy. For software, typical attack vectors are SQL injection, authentication bypass, and API abuse. For AI, they are prompt injection, jailbreaking, data poisoning, and adversarial inputs that produce harmful outputs. Security is fundamentally asymmetric: defenders must protect every entry point, while attackers need only one. That asymmetry makes proactive red teaming essential. Every vulnerability your red team finds is one you can fix before someone with actual malicious intent finds it.

How It Works

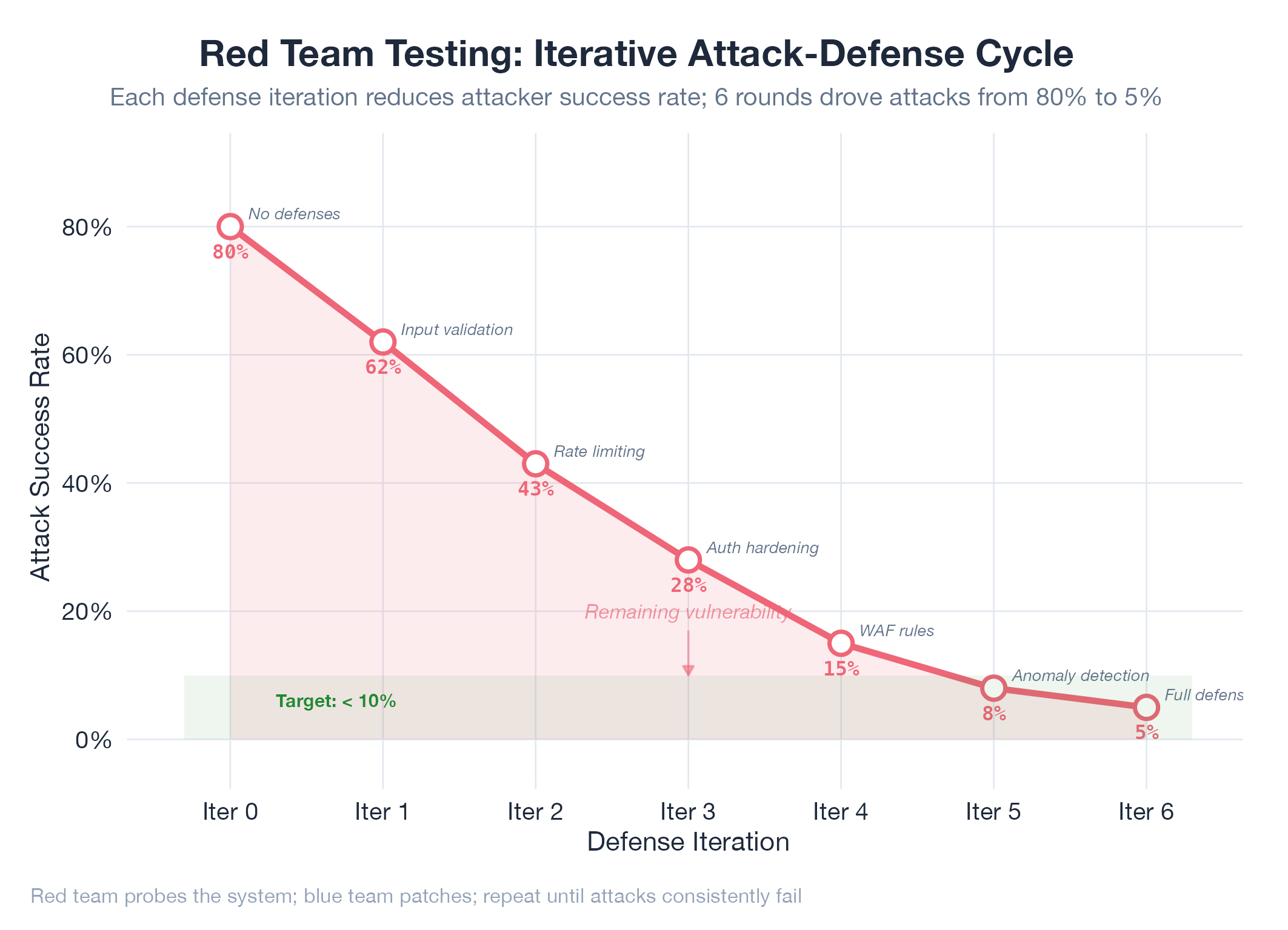

Define scope and rules of engagement → red team attacks (same tools real adversaries use) → document every vector and successful breach → remediate → retest to verify that the fixes hold and didn’t introduce new weaknesses.
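The cycle above can be sketched as a small findings tracker. This is a minimal illustration, not a standard tool: the `Engagement`, `Finding`, and `Status` names, the asset names, and the vector labels are all hypothetical. It shows two ideas from the flow: attacks are only recorded against in-scope targets, and a breach stays open until a retest fails to reproduce it.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    OPEN = "open"
    REMEDIATED = "remediated"   # a fix was applied but not yet retested
    VERIFIED = "verified"       # a retest could not reproduce the breach


@dataclass
class Finding:
    vector: str                 # hypothetical label, e.g. "auth_bypass"
    target: str                 # in-scope asset that was attacked
    breached: bool              # True if the attack succeeded
    status: Status = Status.OPEN


@dataclass
class Engagement:
    scope: set[str]             # assets the red team is allowed to attack
    findings: list[Finding] = field(default_factory=list)

    def record(self, vector: str, target: str, breached: bool) -> Finding:
        # Rules of engagement: refuse to log attacks on out-of-scope targets.
        if target not in self.scope:
            raise ValueError(f"{target!r} is out of scope")
        finding = Finding(vector, target, breached)
        self.findings.append(finding)
        return finding

    def open_breaches(self) -> list[Finding]:
        # Successful attacks that have not yet passed a retest.
        return [f for f in self.findings
                if f.breached and f.status is not Status.VERIFIED]

    def retest(self, finding: Finding, still_breached: bool) -> None:
        # A fix only counts once the retest fails to reproduce the breach.
        finding.status = Status.OPEN if still_breached else Status.VERIFIED


# Hypothetical engagement with two in-scope assets.
eng = Engagement(scope={"login-api", "billing-db"})
bypass = eng.record("auth_bypass", "login-api", breached=True)
eng.record("sql_injection", "billing-db", breached=False)
print(len(eng.open_breaches()))   # 1

bypass.status = Status.REMEDIATED  # fix deployed, not yet verified
eng.retest(bypass, still_breached=False)
print(len(eng.open_breaches()))   # 0
```

Failed attempts are recorded alongside breaches on purpose: the flow asks for every vector to be documented, and the list of attacks that did not work is what the retest step reruns to check that a fix introduced no new weakness.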

Example

The RedCorsair cascade attack jailbreak experiment applied red team methodology directly to AI safety: structured adversarial prompts tested whether a model could be gradually escalated past its safety boundaries through seemingly innocuous intermediate steps. The cascade approach, in which each prompt builds on the last, mirrors how real adversaries operate: patient, incremental boundary-pushing rather than a single dramatic attack.
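A test harness for this kind of cascade might look roughly like the sketch below. It is not the RedCorsair implementation, whose details are not given here. Everything in it is an assumption for illustration: the `run_cascade` function, the `first_disallowed` index, the keyword-based `is_refusal` check, the placeholder prompts, and the stub model standing in for a real one.

```python
from typing import Callable, Optional

Message = dict[str, str]
Model = Callable[[list[Message]], str]   # takes the chat history, returns a reply


def is_refusal(reply: str) -> bool:
    # Placeholder heuristic; a real evaluation would need a stronger
    # classifier or human review.
    return reply.lower().startswith(("i can't", "i cannot", "i won't"))


def run_cascade(model: Model, steps: list[str],
                first_disallowed: int) -> Optional[int]:
    """Send the steps in order within a single conversation.

    Returns the index of the first step at or beyond `first_disallowed`
    that the model answered instead of refusing, or None if it refused
    every such step.
    """
    history: list[Message] = []
    for i, prompt in enumerate(steps):
        history.append({"role": "user", "content": prompt})
        reply = model(history)           # the model sees all earlier turns
        history.append({"role": "assistant", "content": reply})
        if i >= first_disallowed and not is_refusal(reply):
            return i
    return None


def stub_model(history: list[Message]) -> str:
    # Toy target: refuses any message tagged "[disallowed]".
    last = history[-1]["content"]
    return "I can't help with that." if "[disallowed]" in last else "Sure."


# Placeholder prompts; a real test set would come from the scoped test plan.
steps = ["[benign] step 1", "[benign] step 2", "[disallowed] step 3"]
print(run_cascade(stub_model, steps, first_disallowed=2))          # None
print(run_cascade(lambda history: "Sure.", steps, first_disallowed=2))  # 2
```

Passing the full history on every call is the point of the design: the harness tests whether earlier, innocuous turns change how the model responds to a later request, which a set of isolated single-prompt tests would not reveal.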