Overfitting

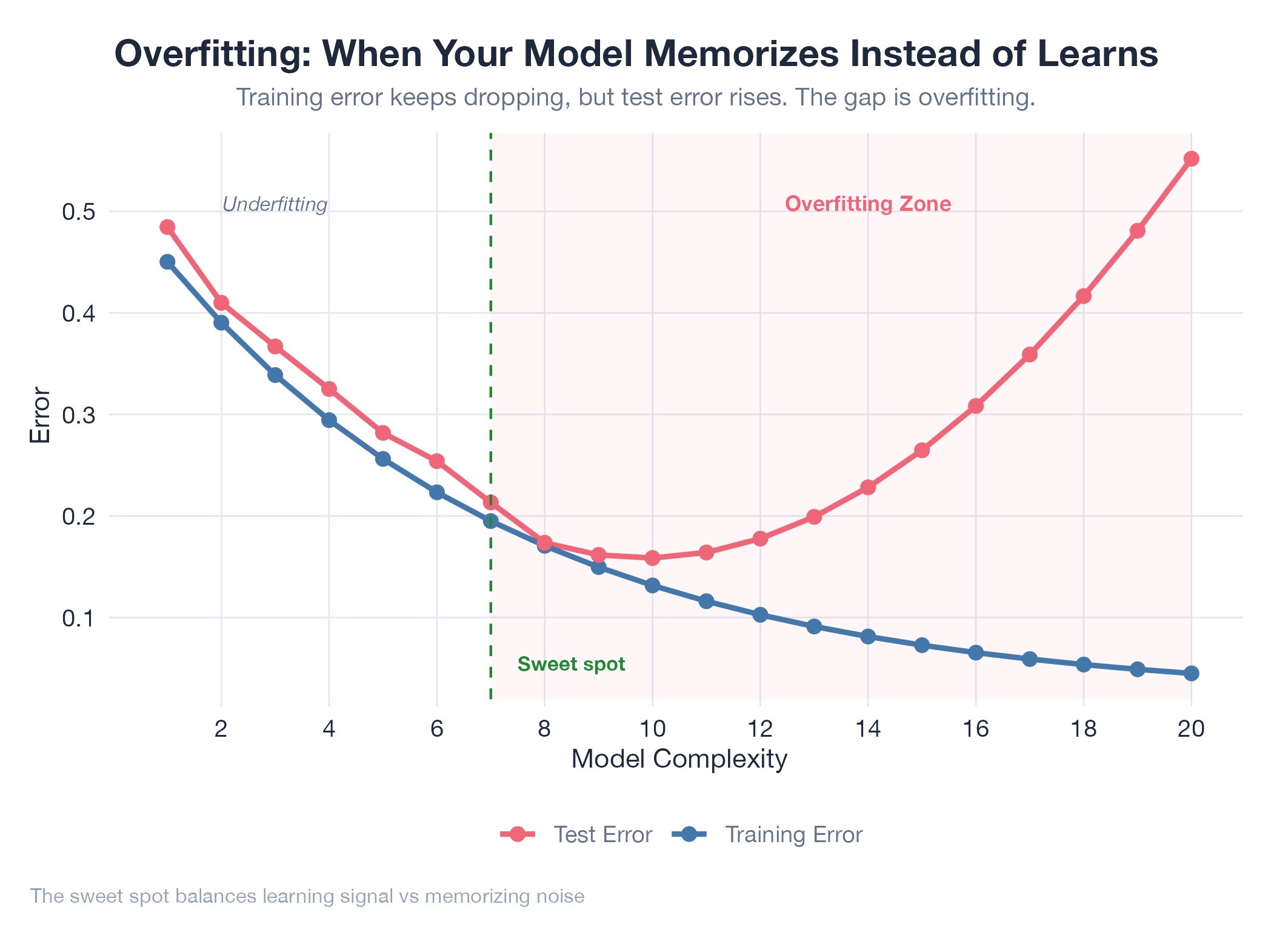

When a model memorizes training data instead of learning the pattern. Perfect in class, fails the exam.

Overfitting happens when a model learns the noise and quirks of its training data so precisely that it can’t handle anything new. Every dataset contains signal (the real pattern) and noise (random variation). A good model captures the signal. An overfit model captures both, producing near-perfect training performance but poor results on new data. The telltale sign is high training accuracy paired with much lower accuracy on held-out test data. Common fixes are holding out test data, cross-validation, regularization, and early stopping.
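Cross-validation, one of the fixes listed above, can be sketched in plain Python. This is a minimal illustration, assuming a straight-line least-squares model and synthetic data; the helper names (`k_fold_indices`, `fit_line`, `cross_val_mse`) are hypothetical. Each point is held out exactly once, so the averaged score estimates performance on data the model was not fit to.

```python
import random


def k_fold_indices(n, k, seed=0):
    """Shuffle indices 0..n-1 and deal them round-robin into k disjoint folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    var = sum((x - mx) ** 2 for x in xs)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = cov / var
    return slope, my - slope * mx


def line_mse(model, xs, ys):
    """Mean squared error of a fitted line on the given points."""
    slope, intercept = model
    return sum((slope * x + intercept - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def cross_val_mse(xs, ys, k=5):
    """Average held-out MSE over k folds; every point is validated exactly once."""
    scores = []
    for fold in k_fold_indices(len(xs), k):
        held_out = set(fold)
        train = [i for i in range(len(xs)) if i not in held_out]
        model = fit_line([xs[i] for i in train], [ys[i] for i in train])
        scores.append(line_mse(model, [xs[i] for i in fold], [ys[i] for i in fold]))
    return sum(scores) / k


# Synthetic data (an assumption): y = 3x + 1 plus unit Gaussian noise.
rng = random.Random(42)
xs = [rng.uniform(0.0, 10.0) for _ in range(100)]
ys = [3.0 * x + 1.0 + rng.gauss(0.0, 1.0) for x in xs]
print("5-fold CV MSE:", round(cross_val_mse(xs, ys), 3))
```

The same loop works for any model: swap `fit_line` and `line_mse` for the model's own fit and score functions.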

How It Works

The more parameters a model has relative to the number of training examples, the more room it has to memorize. The sweet spot between too simple (underfitting) and too complex (overfitting) is found by evaluating the model on data it never saw during training.
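A toy sweep makes the sweet spot visible. This sketch assumes synthetic data (sin(x) plus Gaussian noise) and uses k-nearest-neighbour regression as a stand-in model, with the neighbour count `n_neighbors` as the complexity knob: `n_neighbors=1` memorizes every training point, while `n_neighbors` equal to the training-set size just predicts the global mean. Only the training points are ever used as neighbours; the test points are held out.

```python
import math
import random


def make_data(n, rng, noise=0.3):
    """Toy data (an assumption): signal sin(x) on [0, 6] plus Gaussian noise."""
    xs = [rng.uniform(0.0, 6.0) for _ in range(n)]
    ys = [math.sin(x) + rng.gauss(0.0, noise) for x in xs]
    return xs, ys


def knn_predict(train_x, train_y, x, n_neighbors):
    """Average the targets of the n_neighbors training points closest to x."""
    order = sorted(range(len(train_x)), key=lambda i: abs(train_x[i] - x))
    return sum(train_y[i] for i in order[:n_neighbors]) / n_neighbors


def mse(train_x, train_y, xs, ys, n_neighbors):
    """Mean squared error on (xs, ys) of a k-NN model built from the training set."""
    errors = [(knn_predict(train_x, train_y, x, n_neighbors) - y) ** 2
              for x, y in zip(xs, ys)]
    return sum(errors) / len(errors)


rng = random.Random(0)
train_x, train_y = make_data(300, rng)
test_x, test_y = make_data(300, rng)  # held out: never used as neighbours

results = {}
for n_neighbors in (1, 3, 10, 30, 100, 300):
    train_err = mse(train_x, train_y, train_x, train_y, n_neighbors)
    test_err = mse(train_x, train_y, test_x, test_y, n_neighbors)
    results[n_neighbors] = (train_err, test_err)
    print(f"k={n_neighbors:>3}  train MSE={train_err:.3f}  test MSE={test_err:.3f}")

best = min(results, key=lambda k: results[k][1])
print("k with lowest held-out error:", best)
```

At `n_neighbors=1` the training error is zero because each training point is its own nearest neighbour ("perfect in class"), yet its held-out error is higher than at moderate values. At 300 the model underfits. The held-out minimum marks the sweet spot; the training error alone would always favour the memorizer.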

Example

The oil model ran into overfitting when parameter sweeps were evaluated only on the training window. Configs that scored perfectly on 90 days of WTI data performed worse on data outside that window. The ratchet framework fixed this by always validating winners on a held-out forward window before locking in gains.
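The general idea of a forward-window gate can be sketched as follows. This is not the oil model's or the ratchet framework's actual code: the function names are hypothetical, the "parameter" is assumed to be the lookback of a simple moving-average forecaster, and the price series is a synthetic random walk, not WTI data. The sweep picks a winner on the first 90 points, then promotes it only if it also beats the current config on the 60 points that follow.

```python
import random


def forecast_mse(window, series):
    """MSE of predicting each value as the mean of the previous `window` values."""
    errors = [
        (series[t] - sum(series[t - window:t]) / window) ** 2
        for t in range(window, len(series))
    ]
    return sum(errors) / len(errors)


def promote_if_forward_better(series, candidates, incumbent, train_len, forward_len):
    """Choose the best candidate on the training window, then promote it only if it
    also beats the incumbent on the forward window that immediately follows."""
    train = series[:train_len]
    forward = series[train_len:train_len + forward_len]
    winner = min(candidates, key=lambda w: forecast_mse(w, train))
    if forecast_mse(winner, forward) < forecast_mse(incumbent, forward):
        return winner  # improvement confirmed out of sample: lock it in
    return incumbent  # training-window winner did not generalize: keep the old config


rng = random.Random(7)
prices = [100.0]
for _ in range(149):
    prices.append(prices[-1] + rng.gauss(0.0, 1.0))  # synthetic random walk

chosen = promote_if_forward_better(prices, candidates=(2, 5, 10, 20), incumbent=5,
                                   train_len=90, forward_len=60)
print("config in use:", chosen)
```

The comparison is strict, so a tie on the forward window keeps the incumbent. The forward slice never influences which candidate wins the sweep; it only decides whether that winner is allowed to replace what is already running.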