Benchmark Comparison

Measure your system against a known standard. Not 'is it good?' but 'is it better than the alternative?'

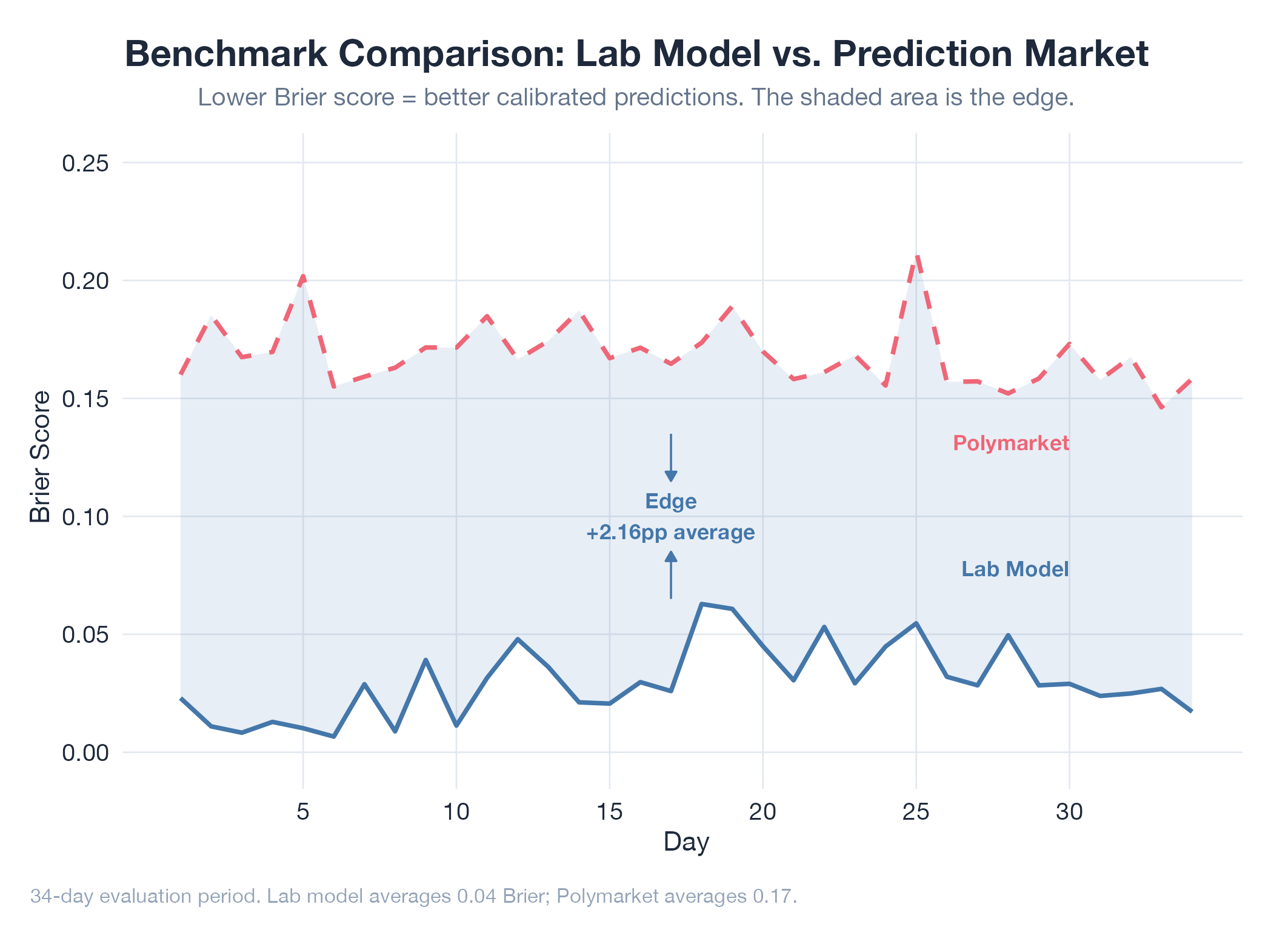

A benchmark comparison measures your system against a known external standard. The question is not “did the metric go up?” (that’s a ratchet) but “how does my system perform relative to the best available alternative?” This forces honesty: a model with 90% accuracy sounds great until you learn the industry standard is 95%. Rigor has three requirements: a shared metric (both systems measured the same way), a fair evaluation set (data neither system was tuned on), and enough observations for a statistical significance test to distinguish genuine differences from noise.

How It Works

Choose an external benchmark (competing product, published result, prediction market) → evaluate your system and the benchmark on identical data with identical metrics → test whether the difference is statistically real. Avoid cherry-picking benchmarks where you look good and ignoring ones where you don’t.
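The last step, testing whether the difference is statistically real, can be sketched with a paired bootstrap. This is a minimal illustration, not a prescribed method: the function name `paired_bootstrap_ci`, its parameters, and the choice of a percentile bootstrap are all assumptions made for the example. It expects one loss value per evaluation item for each system, scored on the same items in the same order, with lower loss meaning better.

```python
import random


def paired_bootstrap_ci(ours, benchmark, n_resamples=10_000, alpha=0.05, seed=0):
    """Percentile-bootstrap interval for mean(benchmark - ours) of per-item losses.

    Hypothetical helper for illustration. Both lists must score the same
    evaluation items in the same order. Lower loss is better, so a positive
    difference favors our system.
    """
    if len(ours) != len(benchmark) or not ours:
        raise ValueError("need two non-empty, equal-length lists of paired losses")

    # Work on per-item differences so each resample keeps the pairing intact.
    diffs = [b - o for o, b in zip(ours, benchmark)]
    n = len(diffs)
    rng = random.Random(seed)

    # Resample items with replacement and record the mean difference each time.
    means = sorted(sum(rng.choices(diffs, k=n)) / n for _ in range(n_resamples))

    lo = means[int((alpha / 2) * n_resamples)]
    hi = means[int((1 - alpha / 2) * n_resamples) - 1]
    return sum(diffs) / n, lo, hi
```

If the returned interval excludes zero, the observed difference is unlikely to be resampling noise at the chosen `alpha`. If it straddles zero, the evaluation set is probably too small to support a claim either way.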

Example

The oil model uses Polymarket’s implied probabilities as its benchmark: same events, same time windows, same Brier score metric. The v17 model showed a +2.16pp edge over the market. Polymarket is a liquid prediction market with thousands of real-money traders, so if that edge holds up under a significance test, it suggests the model extracts signal the market has not priced in. Documented in Oil v17 Realtime Consensus.
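A comparison of this kind could be computed along the following lines. The probabilities and outcomes here are made-up illustrative numbers, not data from the oil model or Polymarket, and the sketch does not attempt to reproduce how the +2.16pp figure was derived. The Brier score is the mean squared difference between forecast probabilities and 0/1 outcomes, so lower is better.

```python
def brier_score(probs, outcomes):
    """Mean squared error between forecast probabilities and 0/1 outcomes."""
    if len(probs) != len(outcomes) or not probs:
        raise ValueError("need two non-empty, equal-length sequences")
    return sum((p - y) ** 2 for p, y in zip(probs, outcomes)) / len(probs)


# Hypothetical forecasts for the same four events (illustrative values only).
outcomes = [1, 0, 1, 0]               # what actually happened
model_probs = [0.8, 0.2, 0.6, 0.4]    # our model's forecasts
market_probs = [0.7, 0.3, 0.5, 0.5]   # market-implied probabilities

model_brier = brier_score(model_probs, outcomes)
market_brier = brier_score(market_probs, outcomes)

# Positive edge means the model's Brier score is lower (better) than the market's.
edge = market_brier - model_brier
print(f"model={model_brier:.4f} market={market_brier:.4f} edge={edge:.4f}")
```

Scoring both forecasters on the same events with the same function is what makes the edge meaningful. Four events is far too few to conclude anything, which is why the sample size requirement matters.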