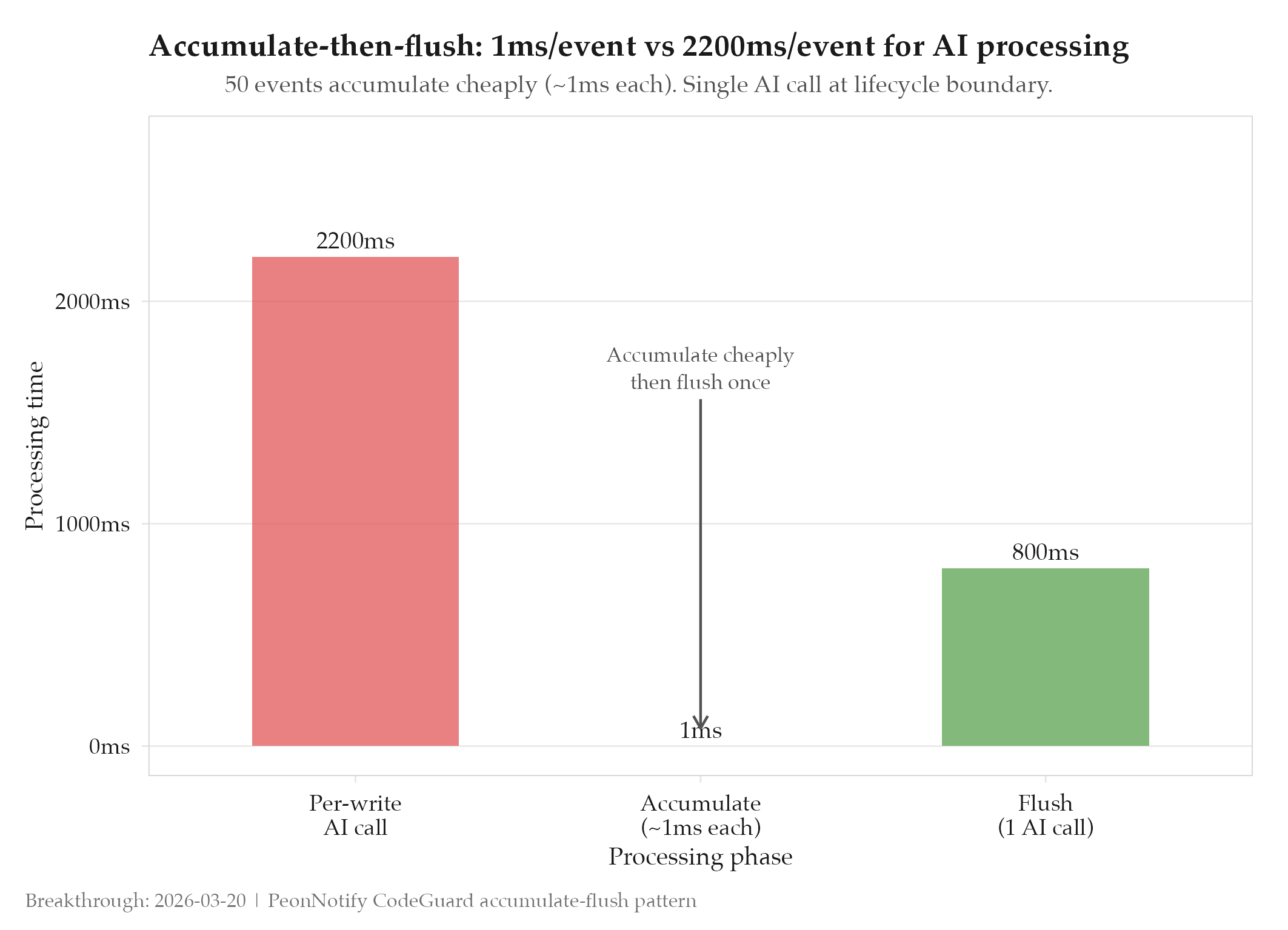

CodeGuard accumulate-then-flush: ~1ms per event, single AI call at boundary

Per-write AI review was killing 76% of sessions via timeout. The accumulate-then-flush pattern cut that to zero, and the fix became a reusable skill applicable to any event-driven AI processing problem.

Context

CodeGuard started as a quality enforcement hook: fire an AI review on every file write and catch issues before they accumulate. The idea was sound, but the implementation had a fatal flaw: each write triggered a synchronous AI call. AI calls take 1-5 seconds, and interactive coding sessions produce dozens of writes per minute, so the math doesn't work. Before the fix, 76% of sessions hit the timeout gate and were killed by the hook; the cure was worse than the disease.
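To make "the math doesn't work" concrete, here is a small worked calculation. The 1-5 second call latency comes from the text; the figure of 30 writes per minute is an assumed reading of "dozens", and the function name is purely illustrative.

```python
# Illustrative arithmetic for synchronous per-write AI review.
# The 1-5 s call latency is from the text; 30 writes/minute is an
# assumed reading of "dozens of writes per minute".
WRITES_PER_MINUTE = 30
CALL_SECONDS_MIN, CALL_SECONDS_MAX = 1.0, 5.0


def review_seconds_per_minute(writes_per_minute: int, call_seconds: float) -> float:
    """Seconds per minute of editing spent blocked on synchronous AI calls."""
    return writes_per_minute * call_seconds


if __name__ == "__main__":
    low = review_seconds_per_minute(WRITES_PER_MINUTE, CALL_SECONDS_MIN)
    high = review_seconds_per_minute(WRITES_PER_MINUTE, CALL_SECONDS_MAX)
    # With 30 writes/minute, any call slower than 2 s needs more than
    # 60 s of review for every 60 s of editing.
    print(f"{low:.0f}-{high:.0f} s of blocking review per 60 s of editing")
```

Under these assumptions it prints `30-150 s of blocking review per 60 s of editing`: even the best case spends half of every minute waiting on the hook, and the worst case needs more review time than the minute contains.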

The secondary problem was correction loops: after CodeGuard fixed an issue, writing the fix triggered another review, which found a new issue, which triggered another write, which triggered another review. The loop had no natural termination condition.

What Changed

The accumulate-then-flush pattern inverts the timing model. Instead of processing each event immediately, the hook collects file changes in a lightweight accumulator (~1ms per event, just an append to a list). The AI call happens once, at a lifecycle boundary: when the session ends or a significant milestone is reached. The full set of changes is processed in a single batch call.
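A minimal sketch of the accumulate-then-flush shape, assuming file changes arrive as path strings and that the batched AI call can be modeled as a callback. `ChangeAccumulator`, `on_write`, `flush`, and `review_batch` are hypothetical names, not CodeGuard's actual API.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class ChangeAccumulator:
    """Accumulate-then-flush for file-change events.

    `review_batch` is a hypothetical stand-in for the single batched AI call.
    """
    review_batch: Callable[[List[str]], None]
    pending: List[str] = field(default_factory=list)

    def on_write(self, path: str) -> None:
        # Hot path: a list append only. No AI call, no I/O.
        self.pending.append(path)

    def flush(self) -> None:
        # Lifecycle boundary (e.g. session end): one AI call for every change.
        if not self.pending:
            return  # nothing changed since the last flush; skip the call
        # Review each path once, in first-seen order, even if written many times.
        batch = list(dict.fromkeys(self.pending))
        self.pending = []
        self.review_batch(batch)


if __name__ == "__main__":
    calls: List[List[str]] = []
    acc = ChangeAccumulator(review_batch=calls.append)
    for path in ["a.py", "b.py", "a.py"]:
        acc.on_write(path)  # no AI calls yet
    acc.flush()             # one call covering a.py and b.py
    acc.flush()             # no-op: nothing pending
    print(calls)
```

The demo prints `[['a.py', 'b.py']]`: three write events, one review call. Collapsing repeated paths at flush time is a design choice of this sketch; a real hook might instead want every intermediate version.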

Content-hash deduplication breaks the correction loop: the accumulator records the content hash of each file at write time. If a subsequent write produces the same content hash, it’s a no-op. The hook never sees its own fixes as new events.
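The sketch below shows one way content-hash deduplication can terminate the correction loop. It assumes SHA-256 as the hash and assumes that the flush records each fix's hash *before* the fix is written, so the resulting write event matches and is dropped. The text does not say exactly how CodeGuard sequences this, and `DedupAccumulator` and `example_review` are hypothetical.

```python
import hashlib
from typing import Callable, Dict

Batch = Dict[str, bytes]  # path -> file contents


def content_hash(data: bytes) -> str:
    """SHA-256 of the file contents (the hash function is an assumption)."""
    return hashlib.sha256(data).hexdigest()


class DedupAccumulator:
    """Accumulator that ignores writes whose content hash it has already recorded."""

    def __init__(self, review: Callable[[Batch], Batch]) -> None:
        # `review` is a hypothetical stand-in for the batched AI call:
        # it receives {path: contents} and returns {path: fixed_contents}.
        self.review = review
        self.seen: Dict[str, str] = {}  # path -> last recorded content hash
        self.pending: Batch = {}        # path -> latest contents awaiting review

    def on_write(self, path: str, data: bytes) -> bool:
        """Record a write event; return False if it is a same-hash no-op."""
        h = content_hash(data)
        if self.seen.get(path) == h:
            return False
        self.seen[path] = h
        self.pending[path] = data
        return True

    def flush(self) -> Batch:
        """Run one review over all pending files and return the fixes to write."""
        if not self.pending:
            return {}
        batch, self.pending = self.pending, {}
        fixes = self.review(batch)
        for path, fixed in fixes.items():
            # Assumption: record each fix's hash before it is written, so the
            # write event the fix triggers matches and is dropped as a no-op.
            self.seen[path] = content_hash(fixed)
        return fixes


def example_review(batch: Batch) -> Batch:
    """Hypothetical reviewer: adds a missing trailing newline."""
    return {p: d + b"\n" for p, d in batch.items() if not d.endswith(b"\n")}


if __name__ == "__main__":
    acc = DedupAccumulator(example_review)
    acc.on_write("a.py", b"x = 1")
    for path, fixed in acc.flush().items():
        acc.on_write(path, fixed)  # the hook observes its own fix: ignored
    print(acc.pending)  # {}: nothing new to review, so the loop ends
```

Because the fix's write is a no-op, nothing is pending after the flush, and the next flush makes no AI call. A genuinely new edit to the same file still has a different hash and is queued normally.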

This pattern was extracted as skills/accumulate-then-flush, a reusable skill applicable to any system where per-event processing is expensive but batch processing at lifecycle boundaries is acceptable.

Impact

Before: per-write AI processing, ~76% of sessions killed by timeout. After: accumulate-then-flush, ~1ms per event during session, single AI call at flush. Zero session kills from the hook. Content-hash dedup prevents correction loops.

The experiment confirmed the 76% session kill rate, partially refuting the original hypothesis that per-write AI review is feasible. That made the finding more valuable: the correct architecture is now documented alongside evidence of why the naive approach fails.