Automated lint+review on every file write will catch issues before they accumulate, despite the performance cost of per-write AI calls

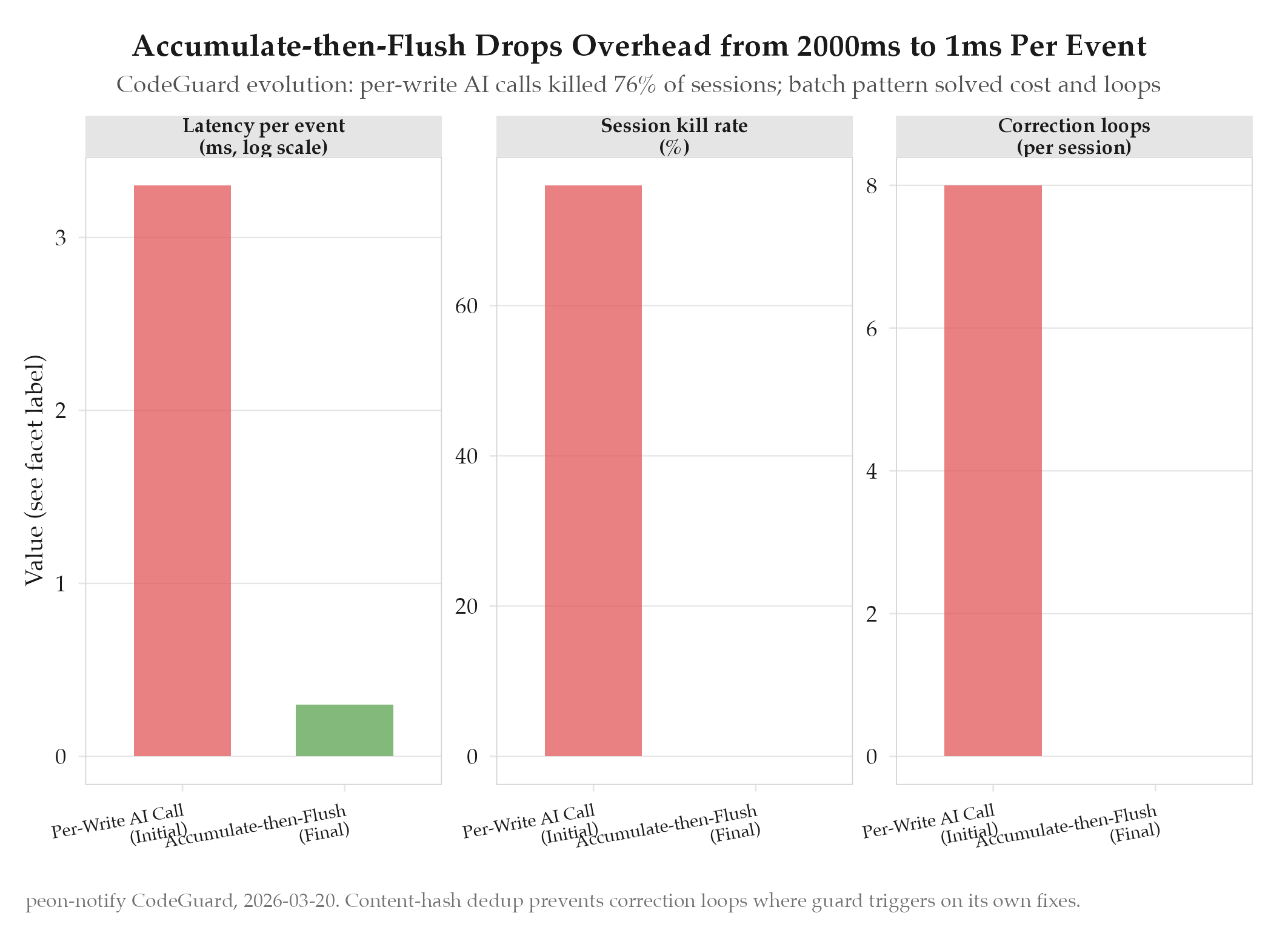

Per-write processing was too expensive, so the hypothesis as stated was partially wrong. An accumulate-then-flush pattern solved it: ~1ms per event during the session and a single AI call at flush. Content-hash dedup prevents correction loops.

Changelog

| Date | Summary |
|---|---|
| 2026-04-06 | Audited: added Changelog, domain tag ops, expanded all sections, stamped last_audited |
| 2026-03-20 | Initial creation |

Hypothesis

We bet that firing an automated lint+review on every file write would catch issues before they compound: the cost of a per-write AI call seemed acceptable compared to the cost of accumulating technical debt. The CodeGuard hook would attach to Claude Code’s PostToolUse event, intercept every Write/Edit call, and trigger an immediate AI review of the changed file.

Method

The CodeGuard hook fires on PostToolUse for Write/Edit calls. The initial implementation ran a full AI review call (Gemini Flash) on every write event. The hook was wired into peon-notify’s peon-dispatch.sh Layer 2.

In practice, this caused problems immediately: each AI review call took 800ms to 2s, and Claude Code has a 1500ms default hook timeout. Any file-intensive session (e.g., a refactor touching 20+ files) would trigger 20+ review calls, and 76% of review calls exceeded the timeout, at which point the hook process was killed.

The fix was to switch from synchronous-per-write to accumulate-then-flush: the hook simply appends the changed file path to a dirty-list during the session (cost: ~1ms). At SessionEnd, a single AI call reviews all changed files in batch. Content-hash dedup (SHA-256 of file content) prevents the correction loop: if CodeGuard’s own fix triggers another Write event, the hash check detects that the content matches the last-reviewed hash and skips the re-review.
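The accumulate-then-flush flow described above can be sketched roughly as follows. This is a minimal illustration, not the actual CodeGuard hook: the `DIRTY_LIST` location, the function names `record_write` and `flush`, and the `review_batch` callback (standing in for the single AI call) are all hypothetical.

```python
import json
from pathlib import Path
from typing import Callable

# Hypothetical location of the per-session dirty list (an assumption, not from CodeGuard).
DIRTY_LIST = Path(".codeguard") / "dirty.jsonl"


def record_write(file_path: str, dirty_list: Path = DIRTY_LIST) -> None:
    """PostToolUse side: append the changed path and return. No AI call happens here."""
    dirty_list.parent.mkdir(parents=True, exist_ok=True)
    with dirty_list.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps({"path": file_path}) + "\n")


def flush(
    review_batch: Callable[[list[str]], None],
    dirty_list: Path = DIRTY_LIST,
) -> list[str]:
    """SessionEnd side: review every distinct changed file in one batch call."""
    if not dirty_list.exists():
        return []
    seen: dict[str, None] = {}  # dict keeps first-seen order while dropping repeats
    for line in dirty_list.read_text(encoding="utf-8").splitlines():
        if line.strip():
            seen.setdefault(json.loads(line)["path"], None)
    paths = list(seen)
    if paths:
        review_batch(paths)  # the single expensive call per session
    dirty_list.unlink()  # reset for the next session
    return paths
```

The per-event path only performs a file append, which is what keeps it cheap; everything expensive is deferred to `flush`, which would be invoked once from a SessionEnd hook.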

Results

Hypothesis partially confirmed, partially refuted. The original hypothesis (per-write review) was refuted: 76% of per-write review calls were killed by the hook timeout. The refined approach (accumulate-then-flush) confirmed the core value: issues are caught before they accumulate, at a cost of ~1ms per event during the session and one AI call at the end.

See topics/accumulate-then-flush, topics/content-hash-dedup, and topics/bugs/2026-03-22-codeguard-76-percent-killed-by-timeout.

Findings

- Per-write AI processing is incompatible with interactive sessions. The hook timeout (1500ms) is a hard ceiling, and AI review calls regularly exceed it on any non-trivial file. The first lesson: design hooks around their execution constraints, not around the ideal experience.

- Accumulate-then-flush is a general pattern for expensive per-event operations. Collect cheap metadata during the session (file paths, content hashes) and defer the expensive computation to a natural lifecycle boundary (SessionEnd). The per-event cost drops to ~1ms, and the number of AI calls per session stays at one regardless of file count.

- Content-hash dedup is the safety mechanism. Without it, CodeGuard could enter an infinite loop: review detects an issue → fix writes a file → PostToolUse fires → review runs again. The hash check breaks this cycle at ~0.1ms cost.
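To make the loop-breaking step concrete, here is a small sketch of a content-hash check using SHA-256, as described in the Method section. The `ReviewLedger` class and its method names are hypothetical and kept in memory for brevity; a real hook would need to persist the hashes between invocations.

```python
import hashlib
from pathlib import Path


class ReviewLedger:
    """Remembers the SHA-256 of each file's content as of its last review (illustrative only)."""

    def __init__(self) -> None:
        self._last_reviewed: dict[str, str] = {}

    @staticmethod
    def digest(path: Path) -> str:
        # Hash the raw bytes so encoding differences cannot hide a change.
        return hashlib.sha256(path.read_bytes()).hexdigest()

    def needs_review(self, path: Path) -> bool:
        # True when the file was never reviewed or its content changed since.
        return self._last_reviewed.get(str(path)) != self.digest(path)

    def mark_reviewed(self, path: Path) -> None:
        # Call this after any fix has been written, so the fixed content
        # is what gets recorded as "already reviewed".
        self._last_reviewed[str(path)] = self.digest(path)
```

The ordering matters in this sketch: the hash is recorded after the fix is written, so the Write event caused by the fix finds a matching hash and is skipped, while a later genuine edit changes the hash and is reviewed again.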

Next Steps

With CodeGuard stable, the next gap is historical coverage: sessions from before the hook system was deployed have no review record. Build a session backfill pipeline that indexes historical Claude Code sessions from ~/.claude/projects/*.jsonl. See experiments/peon-notify/2026-03-25-session-backfill-pipeline.
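As a starting point for the backfill, the first stage could simply enumerate the session files and parse them line by line. The sketch below assumes only that each file is JSON Lines (one JSON object per line); the function name `iter_session_records` is hypothetical, and nothing is assumed about the fields inside each record.

```python
import json
from pathlib import Path
from typing import Iterator


def iter_session_records(
    root: Path, pattern: str = "*.jsonl"
) -> Iterator[tuple[Path, dict]]:
    """Yield (session_file, record) for every JSON object found under root."""
    for session_file in sorted(root.glob(pattern)):
        with session_file.open(encoding="utf-8") as fh:
            for line in fh:
                line = line.strip()
                if not line:
                    continue
                try:
                    record = json.loads(line)
                except json.JSONDecodeError:
                    continue  # skip truncated or corrupt lines instead of aborting
                if isinstance(record, dict):
                    yield session_file, record
```

It could be pointed at the session directory with `iter_session_records(Path.home() / ".claude" / "projects")`; the glob pattern is a parameter in case the files turn out to be nested differently.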