Multi-Persona Audit

Three or more expert reviewers with different lenses audit the same system, surfacing blind spots that any single reviewer would miss.

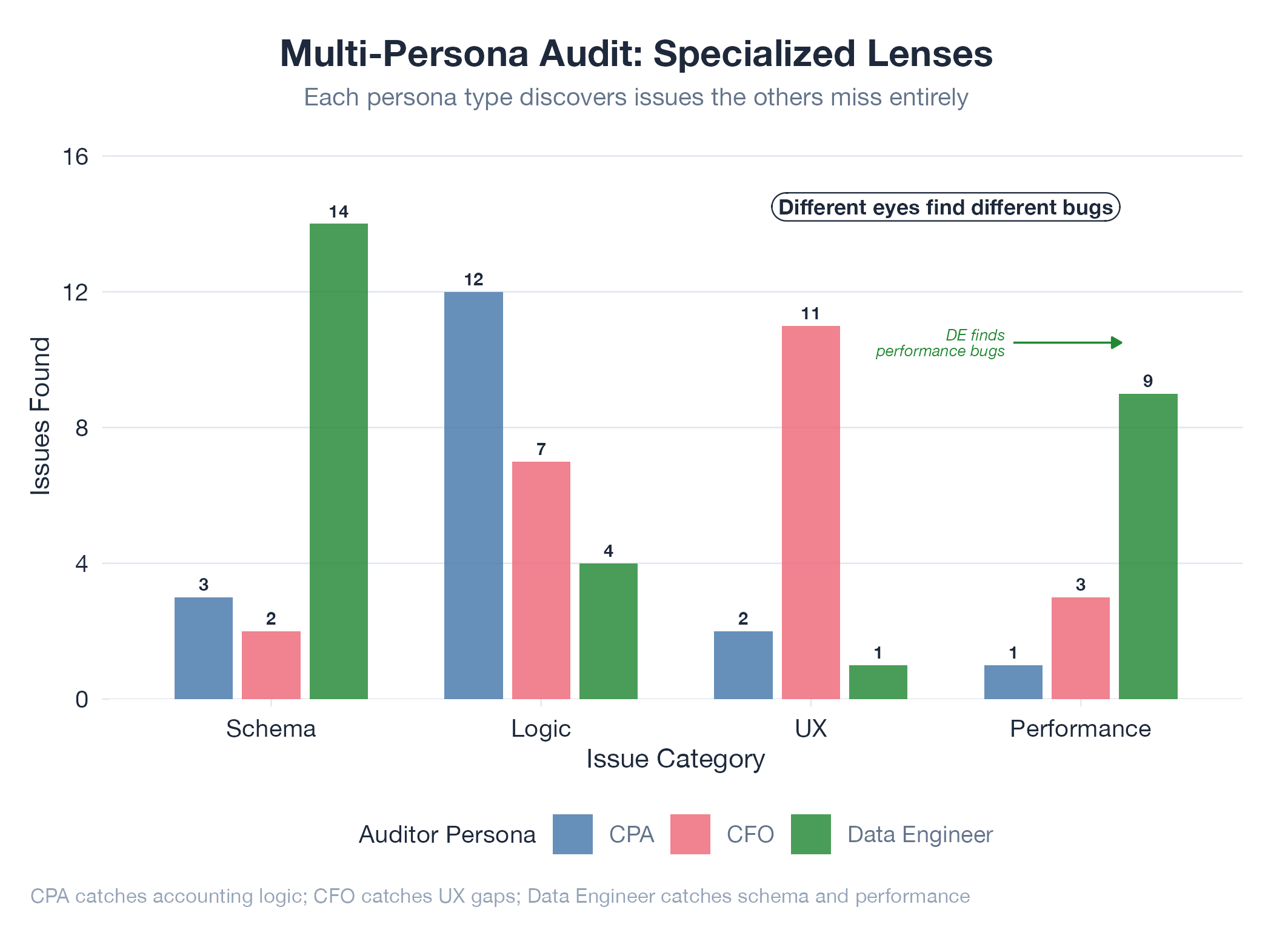

A multi-persona audit applies three or more independent reviewers, each with distinct domain expertise, to the same system in parallel. A CPA finds financial accuracy issues. A data engineer finds schema violations. A CFO finds misleading metric definitions. Each persona reviews independently (no anchoring bias from seeing others’ findings first), and then all findings are cross-referenced. Convergent findings (flagged by multiple personas) get the highest priority; divergent findings (flagged by a single persona) are domain-specific. The method’s power lies in cross-pollination, which reveals issues no single reviewer can see: the CPA doesn’t know the calculation error is a pipeline bug, and the engineer doesn’t know that a correctly functioning pipeline can still produce a misleading metric.
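The cross-referencing step can be sketched in a few lines of Python. This is a minimal illustration, not Quick-Fin’s implementation: the persona keys and issue IDs are hypothetical, and it assumes each persona’s findings have already been normalized to a shared issue ID so that the same underlying problem matches across independent reviews.

```python
from collections import defaultdict

# Hypothetical output of three independent reviews: the issue IDs each
# persona flagged. IDs are illustrative and assumed to be normalized so the
# same underlying problem carries the same ID in every review.
findings_by_persona: dict[str, set[str]] = {
    "cpa": {"revenue-rounding", "fx-rate-lag", "accrual-timing"},
    "data_engineer": {"fx-rate-lag", "null-customer-id", "accrual-timing"},
    "cfo": {"fx-rate-lag", "churn-definition"},
}


def cross_reference(by_persona: dict[str, set[str]]) -> dict[str, set[str]]:
    """Invert the per-persona view: map each issue ID to the personas that flagged it."""
    flagged_by: dict[str, set[str]] = defaultdict(set)
    for persona, issues in by_persona.items():
        for issue in issues:
            flagged_by[issue].add(persona)
    return dict(flagged_by)


def split_convergent(flagged_by: dict[str, set[str]]) -> tuple[list[str], list[str]]:
    """Convergent = flagged by two or more personas; divergent = flagged by exactly one."""
    convergent = sorted(i for i, p in flagged_by.items() if len(p) >= 2)
    divergent = sorted(i for i, p in flagged_by.items() if len(p) == 1)
    return convergent, divergent


flagged_by = cross_reference(findings_by_persona)
convergent, divergent = split_convergent(flagged_by)
print("convergent:", convergent)
print("divergent:", divergent)
```

Because the reviews are only merged after each persona has finished, no reviewer’s list is shaped by another’s, which is the anchoring-bias protection the method relies on.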

How It Works

Define personas with distinct expertise and evaluation criteria → audit independently → score findings (critical/major/minor) → merge and cross-reference → prioritize by severity × frequency across personas.
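The merge-and-prioritize steps can be sketched as follows. This is a minimal Python illustration under stated assumptions: the numeric severity weights (critical=3, major=2, minor=1), the `Finding` record, and the `prioritize` helper are all hypothetical, and "frequency" is read as the number of personas that flagged the issue.

```python
from dataclasses import dataclass

# Assumed weights: the method names the severity levels but not their values.
SEVERITY_WEIGHT = {"critical": 3, "major": 2, "minor": 1}


@dataclass(frozen=True)
class Finding:
    persona: str   # which reviewer raised the finding
    issue_id: str  # shared key used to merge duplicate findings across personas
    severity: str  # "critical" | "major" | "minor"


def prioritize(findings: list[Finding]) -> list[tuple[str, int]]:
    """Merge findings by issue_id and rank by worst severity x number of personas."""
    personas: dict[str, set[str]] = {}
    worst: dict[str, int] = {}
    for f in findings:
        personas.setdefault(f.issue_id, set()).add(f.persona)
        # Personas may grade the same issue differently; keep the worst grade.
        worst[f.issue_id] = max(worst.get(f.issue_id, 0), SEVERITY_WEIGHT[f.severity])
    scores = {issue: worst[issue] * len(personas[issue]) for issue in personas}
    # Highest score first; ties broken alphabetically for stable output.
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))


# Hypothetical findings from three independent audits.
findings = [
    Finding("cpa", "fx-rate-lag", "major"),
    Finding("data_engineer", "fx-rate-lag", "critical"),
    Finding("cfo", "fx-rate-lag", "major"),
    Finding("cpa", "revenue-rounding", "critical"),
    Finding("data_engineer", "null-customer-id", "major"),
    Finding("cfo", "churn-definition", "minor"),
]

for issue_id, score in prioritize(findings):
    print(f"{score:>2}  {issue_id}")
```

With these sample findings, the issue flagged by all three personas (score 9) outranks a critical issue seen only by the CPA (score 3). Taking the maximum severity is a design choice; summing or averaging the per-persona weights would be equally defensible variants.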

Example

Quick-Fin’s 30-iteration multi-persona audit (CPA, data engineer, CFO) found 23 calculation issues, 18 schema violations, and 11 ambiguous metrics. Only 4 issues were flagged by all three personas, but those 4 affected reporting accuracy, pipeline reliability, and executive decision-making simultaneously. The most critical issues lived at the intersection. See also: Architecture at Quick-Fin.