Circular Knowledge Corruption

What Happened

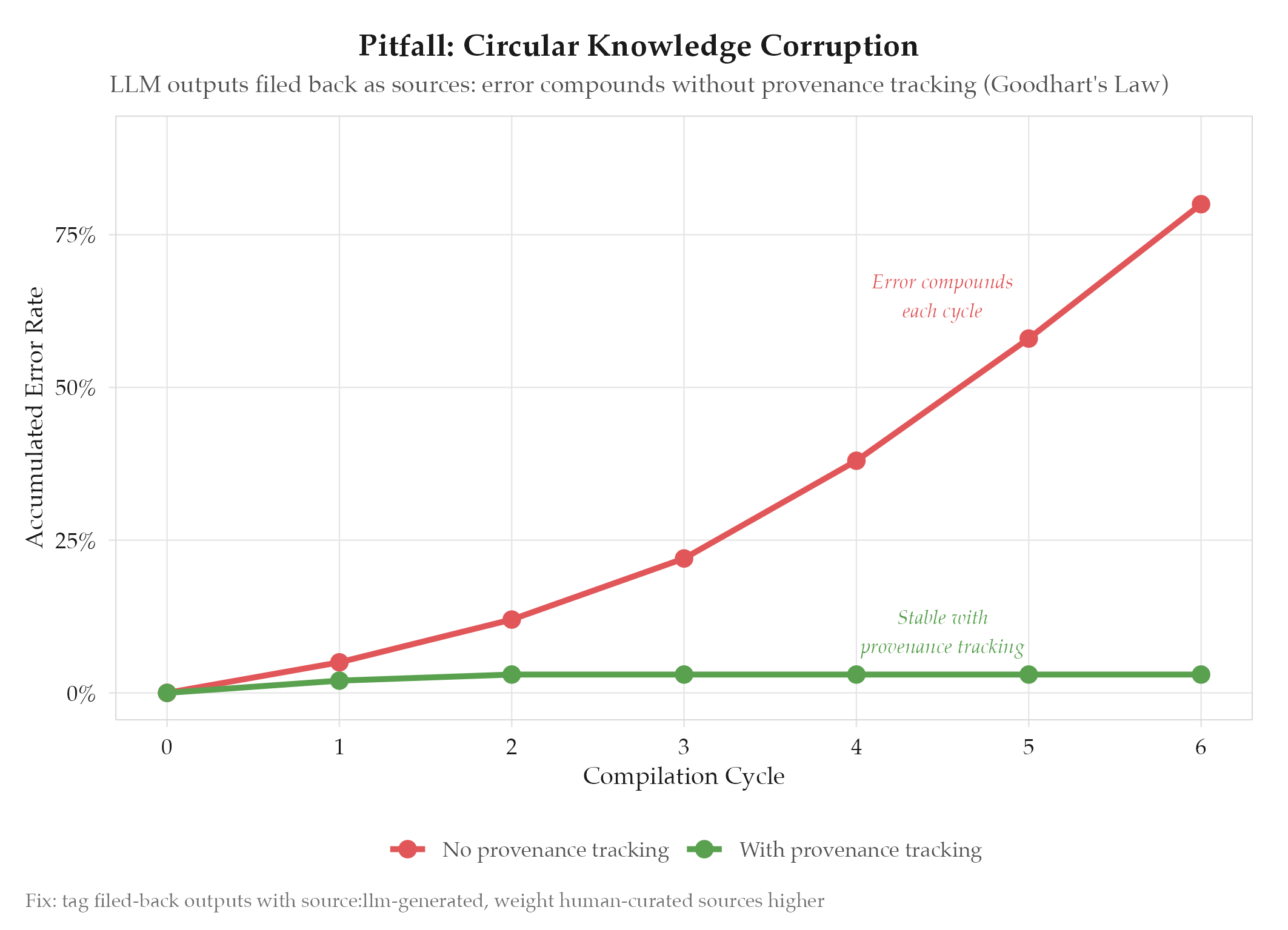

In the LLM Knowledge Base pattern’s compounding loop (outputs are filed back into raw/ and then recompiled), errors in LLM-generated articles are re-ingested as authoritative source material. Each compilation cycle can amplify the original error because the system cannot distinguish between:

- Human-curated raw sources (high authority, externally verified)

- LLM-generated outputs filed back (lower authority, potentially erroneous)

Over multiple compilation cycles, a small factual error grows into an established “fact,” self-reinforced by multiple articles that all trace back to the same erroneous LLM output. This is the knowledge-management equivalent of training an LLM on its own outputs: model collapse through self-reinforcement.
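To make the self-reinforcement concrete, here is a minimal Python sketch that traces a compiled article back to the raw/ files it depends on. The vault layout, the file names (`wiki/a.md`, `raw/output-2026-04-03.md`, and so on), and the `CITES` and `PROVENANCE` maps are hypothetical. The sketch assumes each compiled article records which files it was compiled from. Articles a, b and c appear to corroborate one another, yet every path ends at the same filed-back output, so the claim has no external anchor.

```python
from typing import Optional

# Hypothetical vault state. Files in raw/ have no parents; compiled wiki/
# articles list the files they were compiled from.
PROVENANCE = {
    "raw/paper.md": "human-curated",
    "raw/output-2026-04-03.md": "llm-generated",  # filed-back output carrying the error
}
CITES = {
    "wiki/a.md": ["raw/output-2026-04-03.md"],
    "wiki/b.md": ["raw/output-2026-04-03.md", "wiki/a.md"],
    "wiki/c.md": ["wiki/a.md", "wiki/b.md"],
    "wiki/d.md": ["raw/paper.md"],
}


def trace_roots(note: str, cites: dict, seen: Optional[set] = None) -> set:
    """Return every raw/ file that `note` ultimately depends on."""
    if seen is None:
        seen = set()
    if note in seen:  # already visited: its roots are collected by the first visit
        return set()
    seen.add(note)
    if note not in cites:  # no parents, so this is a raw/ file
        return {note}
    roots = set()
    for parent in cites[note]:
        roots |= trace_roots(parent, cites, seen)
    return roots


def audit(note: str, cites: dict, provenance: dict) -> dict:
    """Compare apparent support with the number of independent, curated roots."""
    roots = trace_roots(note, cites)
    anchors = {r for r in roots if provenance.get(r) == "human-curated"}
    return {
        "apparent_support": len(cites.get(note, [])),
        "distinct_roots": len(roots),
        "external_anchors": len(anchors),
        "flag": not anchors,  # supported only by LLM-generated material
    }


print(audit("wiki/c.md", CITES, PROVENANCE))
# {'apparent_support': 2, 'distinct_roots': 1, 'external_anchors': 0, 'flag': True}
```

Counting distinct roots rather than citing articles is what exposes the echo: two supporting articles collapse to a single origin, and that origin is not externally verified.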

Root Cause

See definitions/root-cause-analysis for the analytical framework. Specific cause: the compounding loop lacks provenance tracking. Filed-back LLM outputs enter the raw/ directory with the same authority as human-curated sources. The compilation step weights all raw sources equally, giving LLM-generated content the same trust as verified external content.
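A minimal sketch of the missing step, assuming provenance is stored as a small frontmatter header and that each raw file can be reduced to one asserted value for the fact in dispute. `tag_filed_back`, `read_provenance`, `resolve` and the `TRUST` weights are illustrative names and numbers, not part of the pattern as described. With equal weights, three copies of an erroneous output outvote one curated source; with provenance weights, the curated source wins.

```python
from collections import defaultdict
from datetime import datetime, timezone
from typing import Optional

# Hypothetical trust weights: the note only says human-curated sources
# should weigh more, not by how much. Untagged files get a middle weight.
TRUST = {"human-curated": 1.0, "llm-generated": 0.3, "unknown": 0.5}


def tag_filed_back(body: str, now: Optional[datetime] = None) -> str:
    """Prepend a provenance header before an LLM output is filed back into raw/."""
    ts = (now or datetime.now(timezone.utc)).isoformat(timespec="seconds")
    return f"---\nsource: llm-generated\nfiled_at: {ts}\n---\n{body}"


def read_provenance(text: str) -> str:
    """Return the `source:` value from a simple frontmatter header, or "unknown"."""
    if text.startswith("---\n"):
        header, _, _ = text[4:].partition("\n---\n")
        for line in header.splitlines():
            key, sep, value = line.partition(":")
            if sep and key.strip() == "source":
                return value.strip()
    return "unknown"


def resolve(claims: list, weights: dict) -> str:
    """claims: (raw file text, asserted value) pairs.
    Return the value with the highest provenance-weighted support."""
    scores = defaultdict(float)
    for text, value in claims:
        scores[value] += weights[read_provenance(text)]
    return max(scores, key=scores.get)


paper = "---\nsource: human-curated\n---\nFounded in 2019."
echo = tag_filed_back("Founded in 2018.")  # one erroneous output, re-ingested
claims = [(paper, "2019")] + [(echo, "2018")] * 3

EQUAL = dict.fromkeys(TRUST, 1.0)  # today's behavior: every raw file counts the same
print(resolve(claims, EQUAL))  # 2018
print(resolve(claims, TRUST))  # 2019
```

With these example weights the protection is only a threshold: a fourth filed-back copy (4 × 0.3 = 1.2) would outvote the curated source again. Weighting reduces the damage but does not replace checking claims before they are filed back.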

How to Avoid

- Provenance tagging: tag all filed-back outputs with `source: llm-generated` and a timestamp. Weight human-curated sources higher at compile time

- Validation gate: add a verification step before filing outputs back to raw/, cross-checking claims against external sources

- Periodic human review: randomly sample compiled articles and verify factual accuracy against original sources

- Hash-based drift detection: track article content hashes across compilations. When a hash changes, compare the article with its previous version and flag articles that drift significantly between cycles for manual review

- Generation depth tracking: track how many compilation cycles each fact has survived. Facts supported only by other LLM-generated facts (no external anchor) get flagged

- Periodic full recompilation: occasionally recompile the entire wiki from original raw sources only (excluding filed-back outputs) to detect accumulated drift
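Hash-based drift detection can be sketched as follows, assuming the previous cycle's article text is still available (for example from version control). A hash comparison only says whether an article changed, so this sketch uses `difflib.SequenceMatcher` from the Python standard library to estimate how much it changed. The `detect_drift` name and the 0.8 similarity threshold are hypothetical.

```python
import difflib
import hashlib


def content_hash(text: str) -> str:
    """SHA-256 of the article text, intended to be stored once per compilation cycle."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def detect_drift(previous: dict, current: dict, threshold: float = 0.8) -> list:
    """Compare two cycles (path -> article text) and return (path, similarity)
    for changed articles whose similarity falls below `threshold`."""
    flagged = []
    for path, new_text in current.items():
        old_text = previous.get(path)
        if old_text is None:
            continue  # new article: nothing to compare against
        # Cheap check first; a real vault would read the stored hash instead of recomputing it.
        if content_hash(old_text) == content_hash(new_text):
            continue
        similarity = difflib.SequenceMatcher(None, old_text, new_text).ratio()
        if similarity < threshold:
            flagged.append((path, round(similarity, 2)))
    return flagged


previous = {
    "wiki/model.md": "The model was released in 2019.",
    "wiki/team.md": "Alpha beta gamma.",
}
current = {
    "wiki/model.md": "The model was released in 2018.",  # small edit
    "wiki/team.md": "Completely different text about something else entirely.",
    "wiki/new.md": "Fresh article.",
}
print([path for path, _ in detect_drift(previous, current)])  # ['wiki/team.md']
```

Character-level similarity is a crude proxy. The one-character date flip in `wiki/model.md` scores as near-identical and is not flagged, so this check catches large rewrites and leaves small factual flips to lineage tracking and human review.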

Provenance tracking is not optional. It is the difference between a virtuous cycle and a vicious one.

Related

- research/2026-04-02-karpathy-llm-knowledge-base-pattern : primary source

- topics/self-improving-agent-patterns : universal anti-pattern: feedback corruption

- ideas/2026-04-02-vault-as-llm-knowledge-base : applying the pattern to this vault

- topics/pitfalls/rubric-overfitting : related: both are cases where the system’s own outputs corrupt its evaluation