Removing undated events produces more honest quality scores

2026-03-11

Signal

Removing events without dates from the pipeline before scoring, rather than including them and flagging them as low quality, is a scope-definition decision. It changes what the audit measures from “all events” to “datable events,” which produces more honest and more actionable quality scores.

Evidence

- Project: projects/jobs-apply/_index: Run performance summary; reviewed last 3 run results and assessed overall pipeline health

- Project: internal audit: Removed events missing dates from the pipeline; reviewed all events and articles for client-deliverable alignment

- Decision rationale: Events without dates can’t be evaluated for temporal relevance, freshness, or scheduling accuracy, so including them artificially deflates every temporal metric

- Downstream impact: Changes the denominator of the quality calculation. A 0.85 score across 1,200 datable events is a different claim from a 0.60 score across 1,500 events where 300 could not be evaluated

- Strategic: Began consolidation discussion between internal audit pipeline and internal-sync-server projects

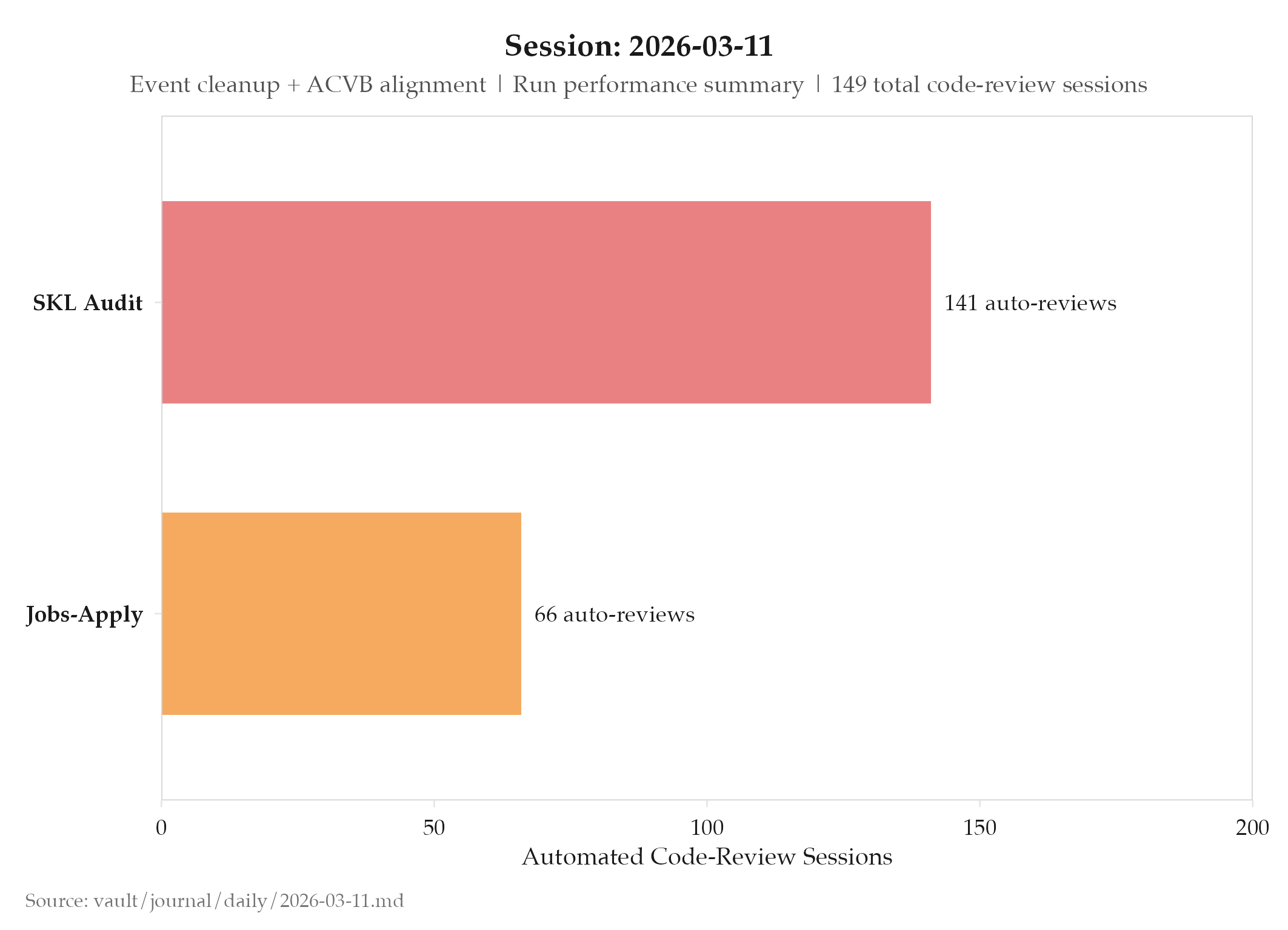

- Volume: 149 automated code-review sessions (internal audit pipeline: 75, autohunt: 66)

So What (Why Should You Care)

When you audit data quality, what you exclude from the audit is as important as what you include. Events without dates aren’t “low quality” records; they’re incomplete records that fall outside the scope of temporal quality evaluation. Scoring them as zero-quality events would drag down every temporal metric for reasons unrelated to the quality of the datable events in the system.
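A minimal sketch of that scoping step, assuming a hypothetical `Event` record with an optional `event_date` field (the real pipeline's schema is not shown in this note). Undated events are set aside and counted rather than scored:

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional, Tuple


@dataclass
class Event:
    # Hypothetical record shape, used only for illustration.
    event_id: str
    event_date: Optional[date]


def split_by_scope(events: List[Event]) -> Tuple[List[Event], List[Event]]:
    """Partition events into (datable, undated) before any temporal scoring."""
    datable = [e for e in events if e.event_date is not None]
    undated = [e for e in events if e.event_date is None]
    return datable, undated


# Made-up sample data.
events = [
    Event("a", date(2026, 3, 11)),
    Event("b", None),
    Event("c", date(2026, 4, 2)),
]

datable, undated = split_by_scope(events)
print(f"{len(datable)} in scope, {len(undated)} excluded and reported separately")
```

Keeping the undated list, instead of discarding it, lets the audit report how many records fell out of scope alongside the score itself.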

This is the same principle behind well-defined denominators in any metric. A “pass rate” of 60% means very different things depending on whether the denominator includes records that couldn’t be evaluated or only records that were fully evaluated. In quality systems, imprecise denominators create imprecise baselines, which makes improvement tracking unreliable.
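The denominator effect can be checked with a few lines of arithmetic. The sketch below reuses the illustrative counts from the Evidence section (1,200 datable events, 300 undated) and assumes, purely for illustration, that every undated event would be scored as zero. Under that assumption a 0.85 mean dilutes to 0.68. The exact diluted figure depends on how a pipeline actually scores records it cannot evaluate.

```python
def mean_score(total_score: float, n_events: int) -> float:
    """Average quality score over an explicit denominator."""
    if n_events <= 0:
        raise ValueError("denominator must be positive")
    return total_score / n_events


# Illustrative numbers; the zero score for undated events is an assumption.
datable_count = 1200
undated_count = 300
datable_mean = 0.85

total = datable_mean * datable_count  # undated events contribute 0 to the total

scoped = mean_score(total, datable_count)
diluted = mean_score(total, datable_count + undated_count)

print(f"datable events only: {scoped:.2f}")   # 0.85
print(f"all events:          {diluted:.2f}")  # 0.68
```

The underlying data is identical in both lines of output; only the scope of the claim changes.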

The client-deliverable alignment review today also demonstrates the value of periodic manual review passes. Automated quality scores catch systematic patterns, but a human review of all events against client-deliverable’s specific alignment requirements catches the cases where the automation’s judgment diverges from the actual business requirements. Both views are necessary; neither replaces the other.

The internal audit pipeline and internal-sync-server consolidation discussion that began today reflects a common architectural question when two projects share 80% of their domain: is the separation providing value, or is it just creating coordination overhead? Beginning this discussion is itself a quality decision, because it acknowledges the coordination cost before it compounds.

The run performance summary for projects/jobs-apply/_index demonstrates the value of reviewing the last 3 runs rather than just the most recent one. A single run can be anomalous: an unusually high success rate on a good day, or an unusually low one caused by a transient platform issue. Three runs give you a rough trend line: is the success rate improving, declining, or flat? That trend drives the next iteration decision: fix the current approach, try a new approach, or scale the current approach.
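One way to make the improving/declining/flat call explicit is to compare the oldest and newest of the reviewed runs against a tolerance. This is a sketch under stated assumptions: the function name, the 0.02 tolerance, and the sample rates are all hypothetical, and a net-change comparison is only one of several reasonable definitions of a trend.

```python
from typing import Sequence


def classify_trend(success_rates: Sequence[float], tolerance: float = 0.02) -> str:
    """Classify success rates (ordered oldest to newest) by their net change.

    Changes within +/- tolerance are treated as noise and reported as "flat".
    """
    if len(success_rates) < 2:
        raise ValueError("need at least two runs to see a trend")
    change = success_rates[-1] - success_rates[0]
    if change > tolerance:
        return "improving"
    if change < -tolerance:
        return "declining"
    return "flat"


# Made-up success rates for the last 3 runs, oldest first.
last_three_runs = [0.40, 0.50, 0.62]
print(classify_trend(last_three_runs))
```

With only three points the result is a rough signal, not a statistical estimate; its job is to stop a single anomalous run from driving the next decision.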

Reviewing docs and Claude files before starting work on a familiar project is also a discipline worth noting explicitly. Even when you wrote the code last week, a rapidly changing project can shift significantly in a few days. The Claude file captures current context that isn’t obvious from the code alone. Reading it before starting prevents work that duplicates something already done or contradicts a decision already made.

Of the 149 automated code-review sessions, 141 are attributed to two projects in a roughly even split (internal audit pipeline: 75, autohunt: 66). That suggests similar levels of active development across both codebases on this day, which is a useful proxy for development activity when git commit logs aren’t directly available.

What’s Next

- Finalize internal audit pipeline and internal-sync-server consolidation decision

- Continue client-deliverable alignment review for remaining articles and events

- Validate that the datable-event filter doesn’t exclude events with partial dates
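The partial-date check could start as a small audit over the raw date values of the removed events. The sketch below assumes dates arrive as ISO-style strings and treats `YYYY` and `YYYY-MM` as partial dates. Both the input format and the category names are assumptions, since the pipeline's actual date representation is not described here.

```python
import re
from collections import Counter
from typing import Iterable, Optional

FULL_DATE = re.compile(r"\d{4}-\d{2}-\d{2}")
PARTIAL_DATE = re.compile(r"\d{4}(-\d{2})?")  # year only, or year and month


def classify_date(raw: Optional[str]) -> str:
    """Return 'missing', 'full', 'partial', or 'unparsed' for a raw date value."""
    if raw is None or not raw.strip():
        return "missing"
    value = raw.strip()
    if FULL_DATE.fullmatch(value):
        return "full"
    if PARTIAL_DATE.fullmatch(value):
        return "partial"
    return "unparsed"


def audit_removed(raw_dates: Iterable[Optional[str]]) -> Counter:
    """Count removed events by date category; only 'missing' is expected."""
    return Counter(classify_date(raw) for raw in raw_dates)


# Made-up raw values for events the filter removed.
removed = [None, "", "2026-03", "2026", "next spring"]
print(audit_removed(removed))
```

Any nonzero `partial` or `unparsed` count among removed events would mean the filter is dropping records that carry some date information, which is a different case from having no date at all.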

Log

- projects/jobs-apply/_index: summarized current state and last 3 run results; assessed pipeline health

- Reviewed docs and Claude files for current project state

- internal audit: removed events missing dates from the pipeline

- Reviewed all events and articles for client-deliverable alignment

- Began consolidation discussion between internal audit pipeline and internal-sync-server; single-repo evaluation started

- 149 automated code-review sessions (internal audit pipeline: 75, autohunt: 66)