Lint before AI review cuts cost and improves signal

2026-03-04

Signal

Sequencing lint before AI review, and only triggering the AI step when lint passes, cuts API costs while making the review signal more reliable, because AI review of already-broken code produces noise, not insight.

Evidence

- Project: projects/peon-notify/_index: CodeGuard pipeline, v0.2.0

- Architecture decision: Lint-then-review pipeline order; AI review only runs if lint passes, which saves API calls on broken code

- Language coverage: 7 languages (JS/TS, Python, Shell, Go, Rust, Ruby, SQL) via file-extension-based detection

- New sounds added:

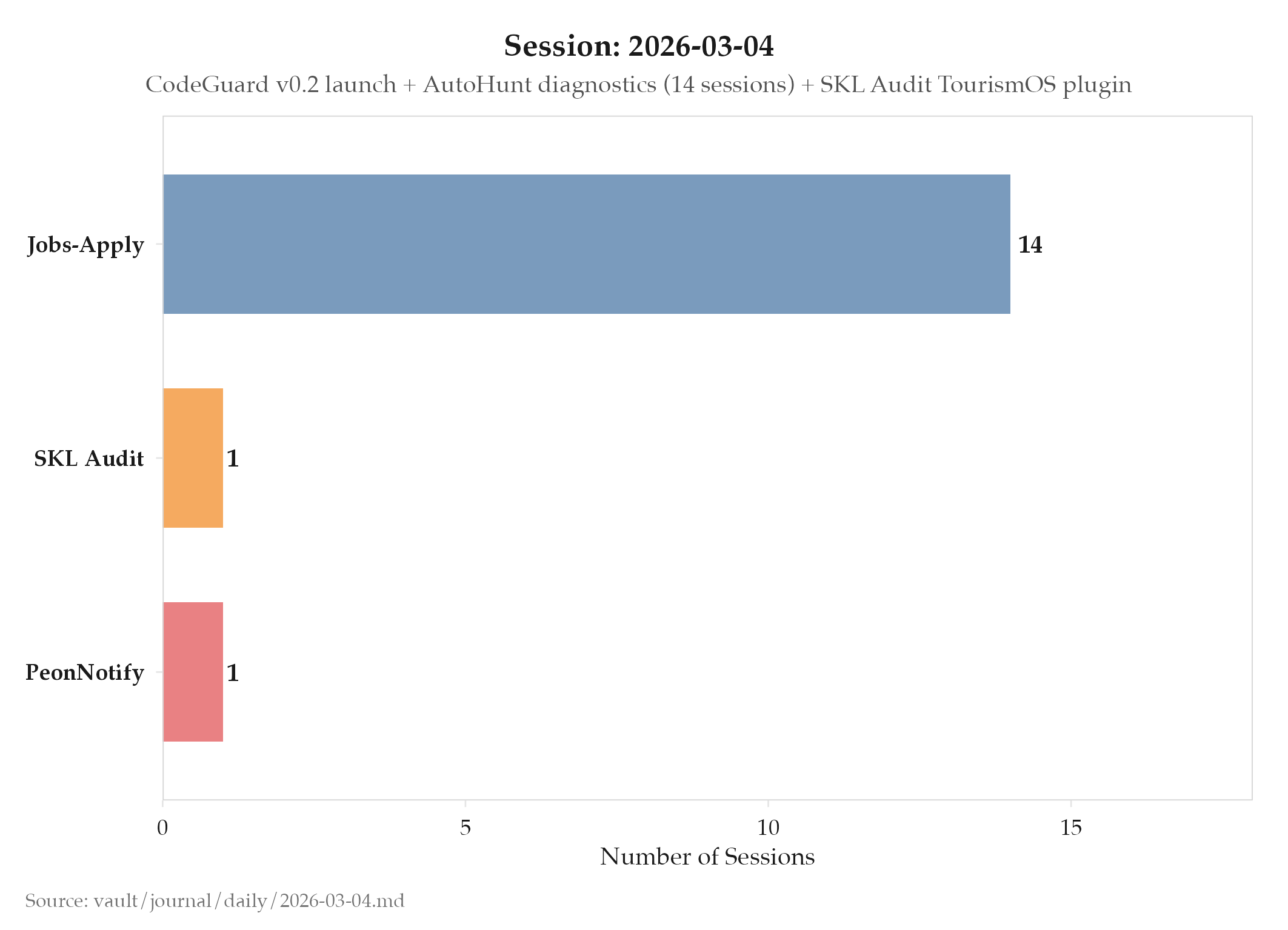

never_mind(tool failure),leave_me_alone(system error),more_gold(rate limit hit) - Project: projects/jobs-apply/_index: 14 interactive sessions; telemetry pipeline, Gmail verification, UI improvements

- Project: internal audit: Initial modular plugin architecture for internal-tourism-api auditing

So What (Why Should You Care)

The lint-before-AI-review pattern is reusable across any pipeline that combines static analysis with an expensive LLM step. The key insight: running LLM review on syntactically broken code is wasteful and produces low-quality output because the model spends its context window explaining obvious syntax errors rather than finding semantic bugs. By gating the expensive step behind the cheap one, you get better review quality AND lower costs simultaneously.
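The gating described above can be sketched in a few lines of Python. This is a minimal illustration, not CodeGuard's actual code: `lint_then_review`, `PipelineResult`, and the two callables are hypothetical names, and the real linter and AI calls are passed in as functions so the sketch stays independent of any particular tool.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass
class PipelineResult:
    lint_ok: bool
    lint_output: str
    review: Optional[str]  # None when the AI step was skipped


def lint_then_review(
    path: str,
    run_lint: Callable[[str], Tuple[bool, str]],
    run_ai_review: Callable[[str], str],
) -> PipelineResult:
    """Run the cheap check first; call the expensive one only if it passes."""
    lint_ok, lint_output = run_lint(path)
    if not lint_ok:
        # Broken code: report the lint errors and skip the paid API call.
        return PipelineResult(False, lint_output, None)
    # Lint passed, so the review sees code it can evaluate for semantic bugs.
    return PipelineResult(True, lint_output, run_ai_review(path))
```

Because the expensive step is an injected callable, the gate can be tested with stubs and the skip path costs nothing beyond the lint run.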

This is the same principle behind any tiered validation system: fail fast on cheap checks before investing in expensive ones. A database that runs integrity checks in-memory before writing to disk follows the same pattern. A compiler that runs syntax checks before type-checking follows it too. The cheap check acts as a filter that ensures the expensive check only sees input it’s actually equipped to evaluate well.

The language-specific prompts for all seven languages matter for a different reason. A generic “review this code” prompt produces generic advice. A Python-specific prompt can reference Python idioms, common misuse patterns (mutable default arguments, bare except clauses), and the specific quality bar for Python code. Language-specific prompts produce higher-signal reviews because they bring the relevant domain knowledge to the evaluation.
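One way to wire extension-based detection to language-specific prompts is shown below, as a sketch under assumptions. The extension table, the function names, and the prompt wording are all hypothetical; only the seven languages and the two Python pitfalls come from this note.

```python
from pathlib import Path
from typing import Optional

# Hypothetical extension table for the seven languages listed in this note.
EXTENSION_TO_LANGUAGE = {
    ".js": "JS/TS",
    ".ts": "JS/TS",
    ".py": "Python",
    ".sh": "Shell",
    ".go": "Go",
    ".rs": "Rust",
    ".rb": "Ruby",
    ".sql": "SQL",
}

# Hypothetical per-language hints; other languages would get their own entries.
LANGUAGE_HINTS = {
    "Python": "Check for mutable default arguments and bare except clauses.",
}


def detect_language(path: str) -> Optional[str]:
    """Return the language for a file path, or None if the extension is unknown."""
    return EXTENSION_TO_LANGUAGE.get(Path(path).suffix.lower())


def build_review_prompt(path: str, source: str) -> Optional[str]:
    """Build a language-specific review prompt, or None for unsupported files."""
    language = detect_language(path)
    if language is None:
        return None
    parts = [f"Review this {language} code for semantic bugs."]
    hint = LANGUAGE_HINTS.get(language)
    if hint:
        parts.append(hint)
    return " ".join(parts) + "\n\n" + source
```

Returning None for unknown extensions lets the caller skip the review entirely rather than fall back to a generic prompt.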

The three new sound categories added today also reflect a design maturity point: projects/peon-notify/_index v0.2.0 moved from “sounds for generic events” to “sounds for specific failure modes.” never_mind (tool failure) communicates something went wrong but recovery is expected. leave_me_alone (system error) communicates something went wrong at a lower level that needs attention. more_gold (rate limit hit) communicates an external constraint, not an internal failure. This semantic differentiation at the audio layer mirrors the semantic differentiation at the code layer: the same design principle applied in both domains.
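The failure-mode-to-sound mapping can be pictured as a small lookup table. The sound names below are the ones added in v0.2.0; the `FailureKind` enum and `sound_for` function are hypothetical names used only for illustration.

```python
from enum import Enum


class FailureKind(Enum):
    TOOL_FAILURE = "tool failure"    # went wrong, recovery expected
    SYSTEM_ERROR = "system error"    # lower-level problem, needs attention
    RATE_LIMIT = "rate limit hit"    # external constraint, not an internal failure


SOUND_FOR_FAILURE = {
    FailureKind.TOOL_FAILURE: "never_mind",
    FailureKind.SYSTEM_ERROR: "leave_me_alone",
    FailureKind.RATE_LIMIT: "more_gold",
}


def sound_for(kind: FailureKind) -> str:
    """Return the sound name for a specific failure mode."""
    return SOUND_FOR_FAILURE[kind]
```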

The 14 interactive sessions on projects/jobs-apply/_index (telemetry, Gmail verification, UI improvements) ran in parallel with projects/peon-notify/_index v0.2.0 development and the initial build of internal audit’s modular plugin architecture. All three projects advanced independently on the same day, each one a separate concern that didn’t block the others.

What’s Next

- Expand CodeGuard language coverage beyond the initial 7

- Validate two-step pipeline reliability under high-frequency file writes

Log

- Built CodeGuard in projects/peon-notify/_index v0.2.0: automatic lint + AI debug review on every file write

- Language-aware linting for 7 languages (JS/TS, Python, Shell, Go, Rust, Ruby, SQL) via extension detection

- claude -p AI review step with language-specific prompts

- Added separate error sounds: never_mind (tool failure), leave_me_alone (system error), more_gold (rate limits)

- Two-step pipeline: lint first, AI review only if lint passes

- projects/jobs-apply/_index: 14 interactive sessions: auto-apply worker diagnostics, telemetry pipeline, Gmail verification integration

- Renamed “Hunt Mode” to “Manually Approve” / “Auto Apply” for UI clarity

- Added Stop Workers from Dashboard + headless default mode

- Dim inactive workers + sort active left in activity display

- internal audit: Initial implementation of modular plugin architecture for internal-tourism-api auditing