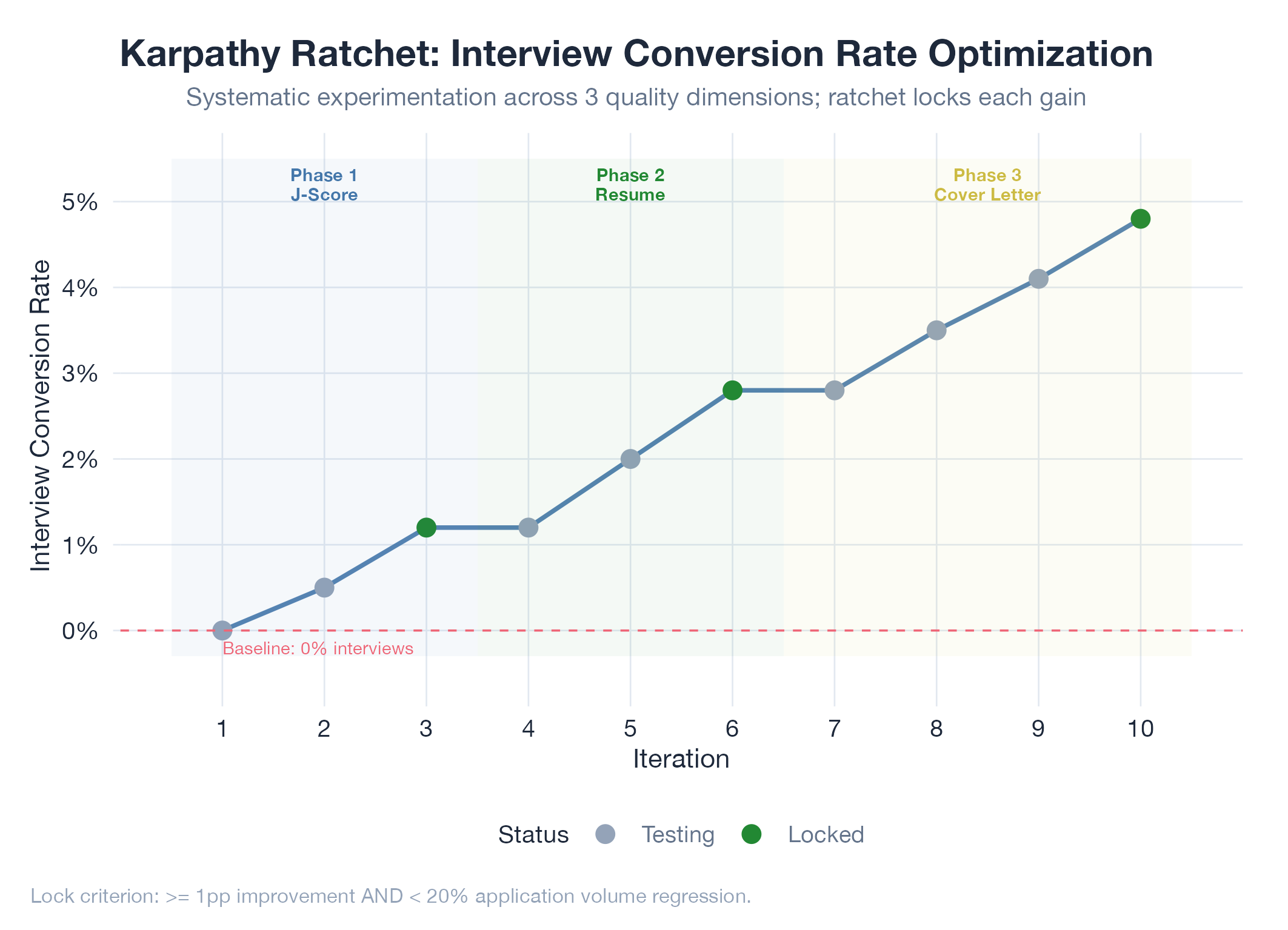

Applying the Karpathy ratchet methodology to jobs-apply's interview conversion rate (currently ~0%) will identify at least 3 lockable configuration improvements within 10 iterations


Changelog

| Date | Summary |
|---|---|
| 2026-04-06 | Audited: last_audited stamped |
| 2026-04-04 | Initial creation |

Hypothesis

We bet that the Karpathy ratchet methodology (a tight loop of measure, hypothesize, implement, re-measure, lock gains) can drive interview conversion rate the same way it has driven quality metrics in other systems. The current jobs-apply interview rate from LinkedIn messages is 0%: of 24 conversations, 12 were recruiter cold outreach and none were responses to applications. This metric is now measurable end-to-end via the Gmail crawl pipeline.

The key insight is that the experiment counter must load from disk: results.tsv is the global state, not in-memory counters. Every iteration must read the current locked floor before proposing a new experiment.
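A minimal sketch of loading ratchet state from disk, assuming results.tsv is tab-separated with the columns listed under Method. The function name `load_ratchet_state`, the `baseline` parameter, and the convention that `locked` is stored as the text `true` or `false` are illustrative assumptions, not part of the existing jobs-apply code.

```python
import csv
from pathlib import Path

# Column names as listed under Method.
COLUMNS = ["experiment_id", "config_param", "value_before", "value_after",
           "metric_before", "metric_after", "locked"]


def load_ratchet_state(path, baseline=0.0):
    """Return (next_experiment_id, locked_floor) read from results.tsv.

    Assumptions (hypothetical): experiment_id is an integer, metric values
    are fractions such as 0.02, and locked is the text "true" or "false".
    """
    path = Path(path)
    if not path.exists():
        # No experiments recorded yet: start at 1 with the baseline as floor.
        return 1, baseline

    with path.open(newline="") as f:
        rows = list(csv.DictReader(f, delimiter="\t"))

    # The counter comes from the file, never from an in-memory variable.
    next_id = max((int(r["experiment_id"]) for r in rows), default=0) + 1

    # The floor is the metric of the most recent locked experiment.
    floor = baseline
    for r in rows:
        if r["locked"].strip().lower() == "true":
            floor = float(r["metric_after"])
    return next_id, floor
```

Calling this at the start of every iteration means a restarted process resumes from the recorded floor rather than from zero.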

The hypothesis is that systematic experimentation on application quality dimensions (J-Score threshold, resume tailoring aggressiveness, cover letter personalization, company targeting criteria) will produce measurable interview conversion improvements within 10 iterations, because these are the variables closest to the decision that hiring managers make.

Method

- Define metric: Interview conversion rate = interviews / total_applications. Track via Gmail crawl pipeline (already classifies interview signals).

- Baseline measurement: current rate across all channels (LinkedIn, Greenhouse, Lever, Direct, Workday). Use the CHRO audit system data.

- Initialize ratchet: results.tsv in jobs-apply root with columns: experiment_id, config_param, value_before, value_after, metric_before, metric_after, locked

- Phase 1 (experiments 1-3): Vary J-Score minimum threshold. Current: accept all scores. Test: minimum 60, 70, 80. Hypothesis: higher quality targeting produces more interviews even with fewer applications.

- Phase 2 (experiments 4-6): Vary resume tailoring. Test: generic resume vs keyword-optimized vs full rewrite per posting. Measure callback rate.

- Phase 3 (experiments 7-10): Vary cover letter strategy. Test: no cover letter vs template vs AI-personalized vs STAR-format with company-specific hooks.

- Lock criterion: if an experiment improves interview conversion by >= 1pp AND doesn’t regress application volume by > 20%, lock it as the new floor.
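The metric and lock criterion above can be sketched as two small functions. This is an illustration under stated assumptions: the names `conversion_rate` and `should_lock` are hypothetical, rates are fractions (0.04 means 4%), and "application volume" is taken to mean applications submitted over comparable time windows.

```python
def conversion_rate(interviews, total_applications):
    """Interview conversion rate = interviews / total_applications."""
    if total_applications == 0:
        return 0.0  # no applications yet, so no measurable conversion
    return interviews / total_applications


def should_lock(floor_rate, new_rate, floor_volume, new_volume,
                min_gain_pp=1.0, max_volume_drop=0.20):
    """Return True if the experiment qualifies as the new floor.

    Locks when conversion improves by at least min_gain_pp percentage
    points AND application volume does not fall by more than
    max_volume_drop relative to the floor configuration.
    """
    gain_pp = (new_rate - floor_rate) * 100
    if floor_volume > 0:
        volume_drop = (floor_volume - new_volume) / floor_volume
    else:
        volume_drop = 0.0  # nothing to regress from
    # Small tolerance so a gain of exactly 1pp is not lost to float rounding.
    return gain_pp >= min_gain_pp - 1e-9 and volume_drop <= max_volume_drop


# Illustrative numbers only, not measured results.
rate = conversion_rate(2, 45)
print(should_lock(0.0, rate, floor_volume=50, new_volume=45))
```

Keeping the thresholds as parameters makes it possible to reuse the same check for other metrics with different tolerances.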

Results

Pending. Baseline measurement required before iteration begins. Expected timeframe: 2-3 weeks per phase (need sufficient application volume for statistical significance).

Findings

Pending.

Next Steps

If confirmed, extract the cross-project ratchet configuration as a reusable template. Apply to bloomnet (data freshness) and redcorsair (API reliability) as described in the source idea.