A/B Test

Split your audience in two, show each group a different version, and let the numbers pick the winner.

An A/B test exposes two versions of something to comparable groups at the same time and measures which performs better. The “A” is the control (what you’re already doing); the “B” is the challenger. Random assignment spreads confounders, known and unknown, evenly across both groups on average, so a statistically significant difference can reasonably be attributed to the change rather than to who happened to see it. The critical rule is to run both versions simultaneously: comparing “this week” to “last week” isn’t a test, it’s two different weeks. A test also requires a clear primary metric chosen before it starts, and enough traffic to reach statistical significance.
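To make “enough traffic” concrete, here is a rough sketch of a per-group sample size estimate for a conversion-rate metric. It assumes a two-sided test on two proportions with the common normal approximation; the function name, the 5% significance level, and the 80% power default are illustrative assumptions, not values from this article.

```python
import math
from statistics import NormalDist


def sample_size_per_arm(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Approximate visitors needed in EACH group (A and B).

    baseline_rate: current conversion rate of the control, e.g. 0.05
    relative_lift: smallest relative improvement worth detecting, e.g. 0.2 for +20%
    alpha, power:  assumed defaults (5% false-positive rate, 80% power)
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)

    # Critical values of the standard normal distribution.
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)

    # Sum of the Bernoulli variances of the two groups.
    variance = p1 * (1 - p1) + p2 * (1 - p2)

    n = (z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2
    return math.ceil(n)


if __name__ == "__main__":
    # Hypothetical inputs: 5% baseline conversion, hoping to detect a 20% relative lift.
    print(sample_size_per_arm(0.05, 0.2))
```

The smaller the lift you want to detect, the more traffic each group needs, which is why low-traffic pages often cannot support tests of subtle changes.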

How It Works

Randomly split users/sessions → run A and B simultaneously → collect enough data for significance → compare primary metric → winner becomes the new default and the next test’s control.
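The flow above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a production setup: `assign_variant` and `two_proportion_z_test` are hypothetical helper names, assignment is done by hashing a user ID (one common way to get a stable, roughly 50/50 split), and the comparison uses a pooled two-proportion z-test, which suits a conversion-rate primary metric.

```python
import hashlib
import math
from statistics import NormalDist


def assign_variant(user_id: str, experiment: str) -> str:
    """Deterministically place a user in group A or B.

    Hashing the experiment name together with the user ID keeps the split
    stable across visits and independent between experiments.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).hexdigest()
    return "A" if int(digest, 16) % 2 == 0 else "B"


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b

    # Pooled rate under the null hypothesis that A and B convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))

    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical experiment name and user ID.
    print(assign_variant("user-123", "hero-copy"))

    # Made-up counts for illustration: conversions and visitors per group.
    z, p = two_proportion_z_test(conv_a=500, n_a=10_000, conv_b=600, n_b=10_000)
    print(f"z = {z:.2f}, p = {p:.4f}")
```

If the p-value falls below the threshold chosen before the test started, B replaces A as the default and becomes the control for the next test.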

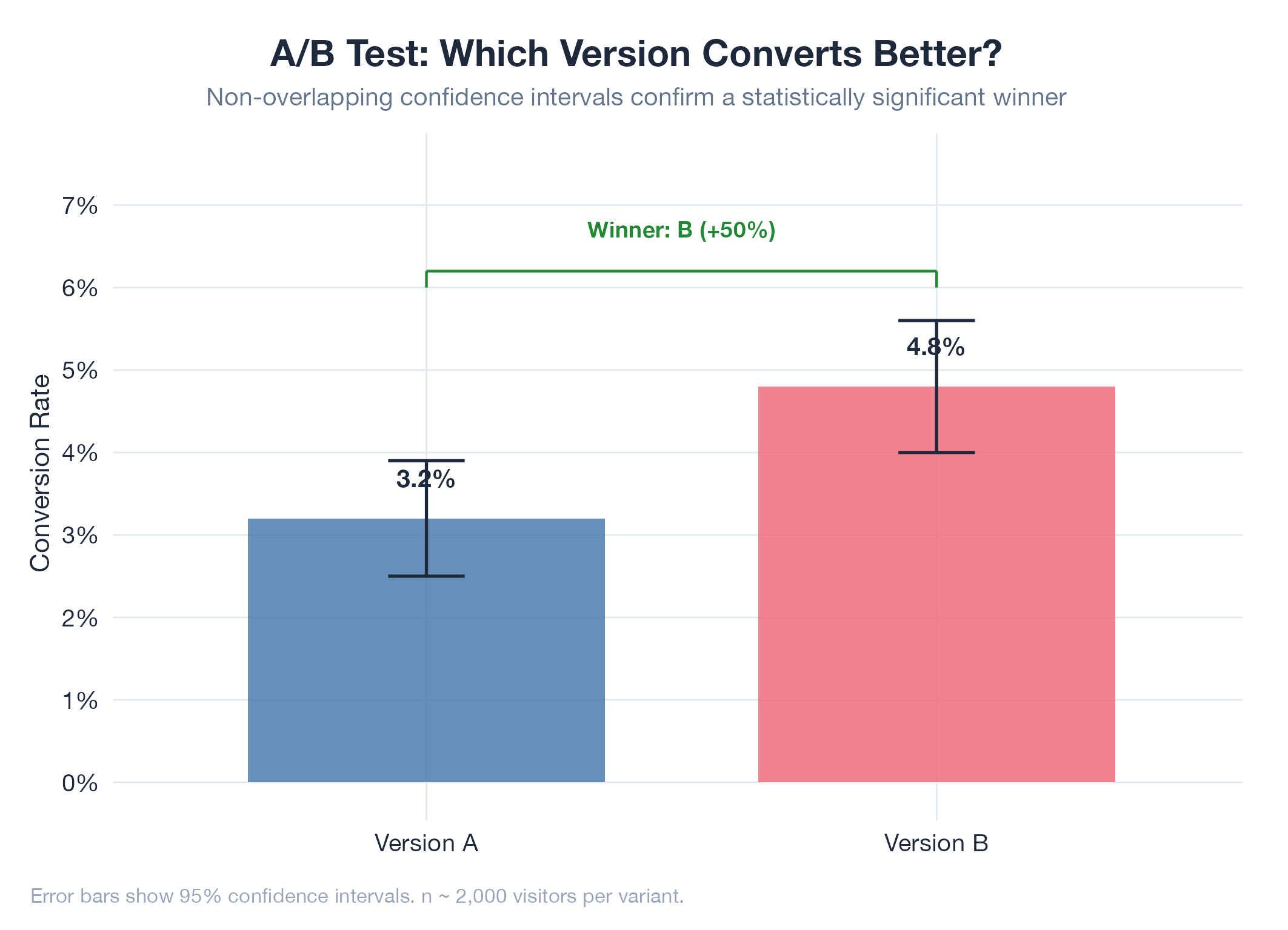

Example

Jobs-apply ran a hero-copy split test on the SaaS landing page: outcome-focused copy (“Land interviews 3x faster”) vs. feature-focused (“AI-powered job applications”). The outcome-focused variant won on signup conversion per unique visitor. The test avoided the “comparing two weeks” trap by running both simultaneously.