LinkedIn anti-detection suite: account unrestricted, 83% session success

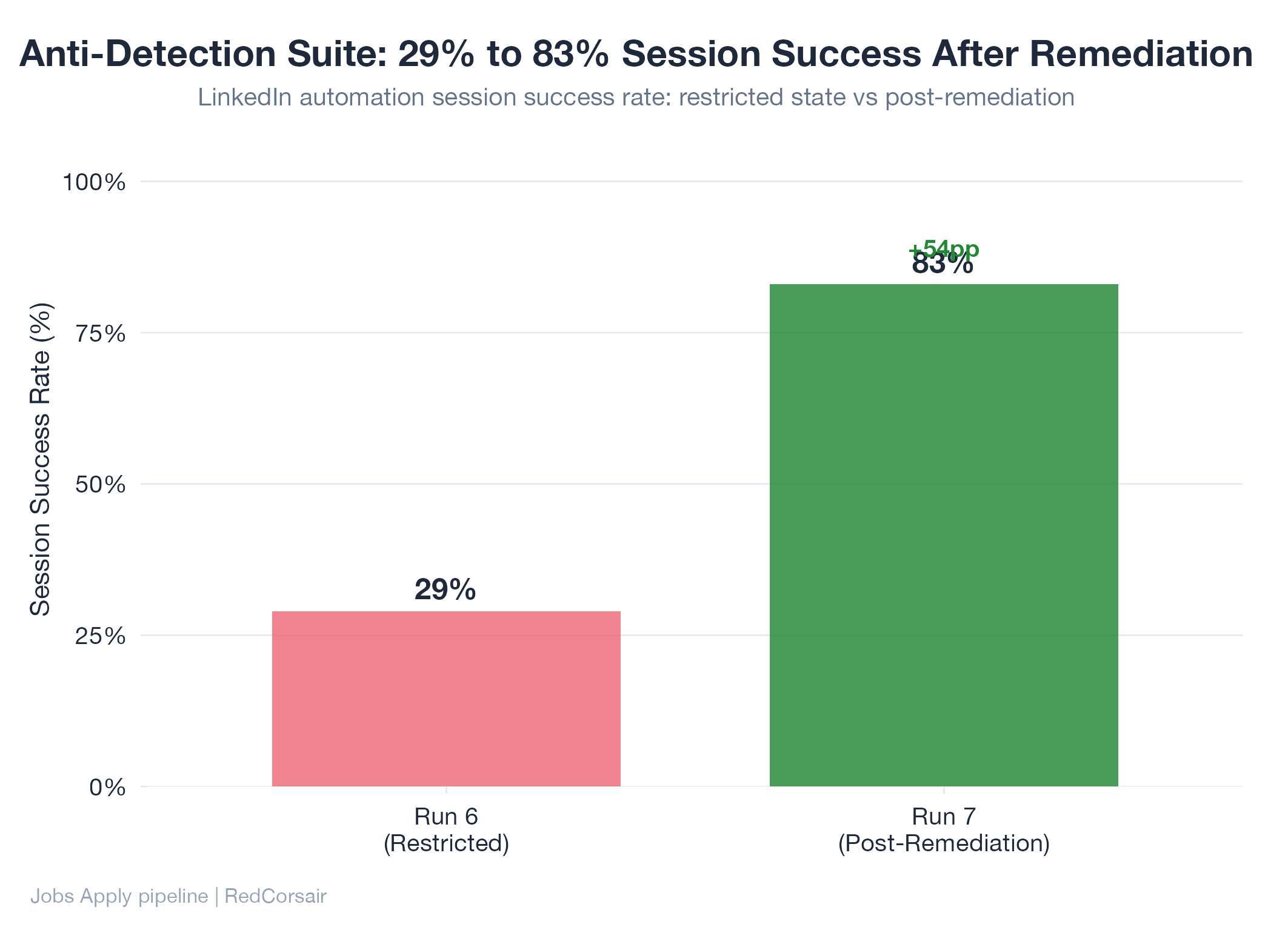

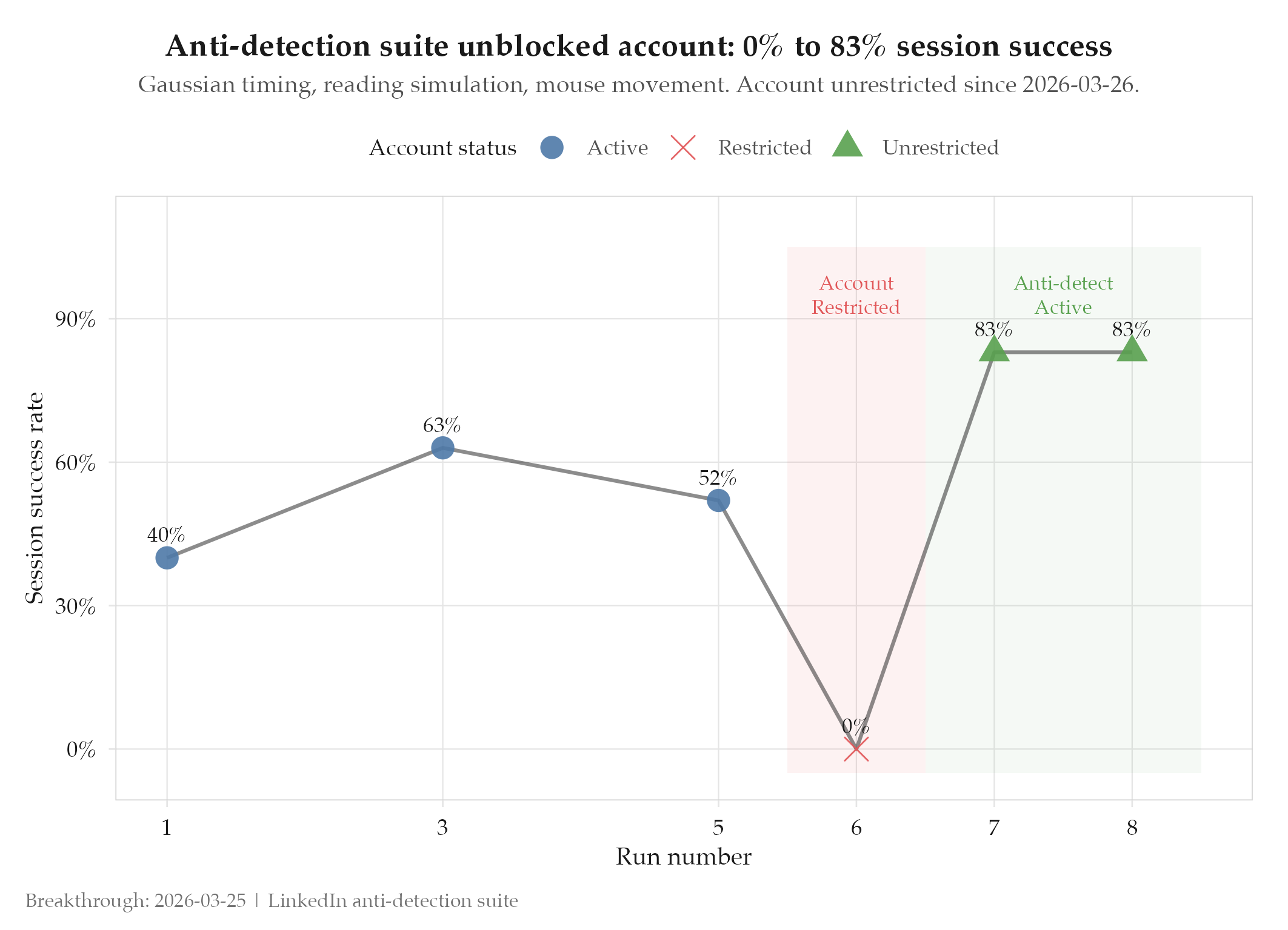

Before (Run 6, 2026-03-25): account restricted, 47 fixed-delay timing points, no reading simulation.

After (Run 7): account unrestricted on 2026-03-26, 83% session success rate, 47 gaussian timing points, and a safety rail to prevent recurrence.

Account restricted on 2026-03-25. Unrestricted the next day. The fix: replace 47 fixed-delay timing points with gaussian distributions, add reading simulation and mouse movement, and install a safety rail that blocks the high-frequency submission pattern that triggered the restriction.

Context

Run 6 of the LinkedIn Easy Apply ratchet triggered a behavioral detection restriction, and the account was suspended from applying. The cause was not technical fingerprinting: CDP-based shadow DOM access and automation framework signals went undetected. The cause was behavioral. A fixed 2000 ms delay between every action is a detectable pattern. Spending 0 seconds on a job description before clicking Apply is suspicious. Submitting 10 applications in 30 minutes with machine-precision timing is suspicious.

In this incident, LinkedIn’s detection acted on behavioral signals, not technical ones. Every prior anti-detection effort had targeted the wrong threat model.

What Changed

A 10-iteration remediation plan addressed behavioral detection from first principles. Iterations 1-6 (P0-P1) were deployed in 4 days.

Iteration 1 converted all 47 fixed timing points to gaussian distributions (mean = target delay, stddev = 0.3x the mean, clamped to 0.4-2.0x the mean). Iteration 2 added a 20-40 second reading simulation per job description: progressive scroll, variable speed, occasional scroll-back, and pauses at key sections. Iteration 3 replaced instant clicks with Bezier curve mouse movements, including an overshoot-and-correct motion on 10% of movements. Iteration 4 installed session pacing: 8-minute inter-submission gaps (gaussian), a maximum of 10 submissions per session, and a 90-minute session cap.
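The Iteration 1 timing rule can be sketched as a small sampler. This is a minimal illustration of the stated parameters only (stddev of 0.3x the mean, clamped to 0.4-2.0x the mean); the function name `humanized_delay` and the optional `rng` argument are hypothetical and not taken from the actual suite.

```python
import random
from typing import Optional


def humanized_delay(mean_s: float, rng: Optional[random.Random] = None) -> float:
    """Sample a delay in seconds from a clamped gaussian.

    Assumed parameters, taken from the description above:
    stddev = 0.3 * mean, result clamped to [0.4 * mean, 2.0 * mean].
    """
    if mean_s <= 0:
        raise ValueError("mean_s must be positive")
    # Hypothetical convenience: accept a seeded generator for reproducible runs.
    generator = rng if rng is not None else random.Random()
    sample = generator.gauss(mean_s, 0.3 * mean_s)
    # Clamp so that outliers never fall below 0.4x or above 2.0x the target.
    return min(max(sample, 0.4 * mean_s), 2.0 * mean_s)
```

With a 2-second target, every sample falls between 0.8 s and 4.0 s, and no two consecutive delays are likely to match. The clamp matters as much as the distribution: an unclamped gaussian can occasionally produce near-zero or negative values.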

The safety rail, a hard stop at 3 submissions per 30-minute window, is the most important single control. It prevents the high-frequency pattern regardless of whether any other measure fails.
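One way to express such a rail is a sliding-window counter that refuses to record a submission once the limit is reached. The sketch below assumes a sliding (rather than fixed) 30-minute window, which the description does not specify; the class name `SubmissionRail`, its methods, and the injectable `clock` are hypothetical.

```python
import time
from collections import deque
from typing import Callable, Deque


class SubmissionRail:
    """Hard stop: at most `max_submissions` within any `window_s` seconds."""

    def __init__(
        self,
        max_submissions: int = 3,
        window_s: float = 30 * 60,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._max = max_submissions
        self._window_s = window_s
        self._clock = clock  # injectable so the rail can be tested without waiting
        self._stamps: Deque[float] = deque()

    def allow(self) -> bool:
        """Return True if one more submission fits in the current window."""
        now = self._clock()
        # Drop timestamps that have aged out of the window.
        while self._stamps and now - self._stamps[0] >= self._window_s:
            self._stamps.popleft()
        return len(self._stamps) < self._max

    def record(self) -> None:
        """Register a submission, or raise if the rail is tripped."""
        if not self.allow():
            raise RuntimeError("safety rail tripped: submission limit reached")
        self._stamps.append(self._clock())
```

Because the check lives in `record` itself, the limit holds even if the caller forgets to call `allow` first, which is the property that makes the rail independent of the other measures.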

Impact

Before: account restricted, no successful sessions, and fixed timing patterns detectable as automation. After: account unrestricted on 2026-03-26. Run 7 achieved 83% session success (5 of 6 sessions). Average dwell time per job: 28.4 s. Average inter-submission gap: 9.2 minutes. Zero detection signals during Run 7.

The reading simulation produced an unintended second benefit: the 20-40s dwell time gave the scoring system time to evaluate the job before committing to submission. Application quality improved alongside detection evasion.